The Trump AI voice phenomenon has taken the internet by storm. Whether you have encountered a viral video of a Donald Trump AI voice delivering a stand-up routine, stumbled across an AI Trump voice generator tucked inside a meme thread, or simply typed "trump ai voice" into a search bar out of pure curiosity, you are not alone. Every month, millions of people want to understand exactly what this technology is, how accurate it can be, and where the legal and ethical boundaries lie.

This guide covers everything: what an AI Donald Trump voice actually is under the hood, a ranked comparison of the best Trump AI voice generator tools available today, how similar political AI voices like the Joe Biden AI voice and Obama AI voice work, where celebrity cloning such as the David Attenborough AI voice fits in, and what the latest AI voice cloning regulation news means for creators, platforms, and audiences alike.

What Is a Trump AI Voice?

How AI Voice Cloning Technology Works

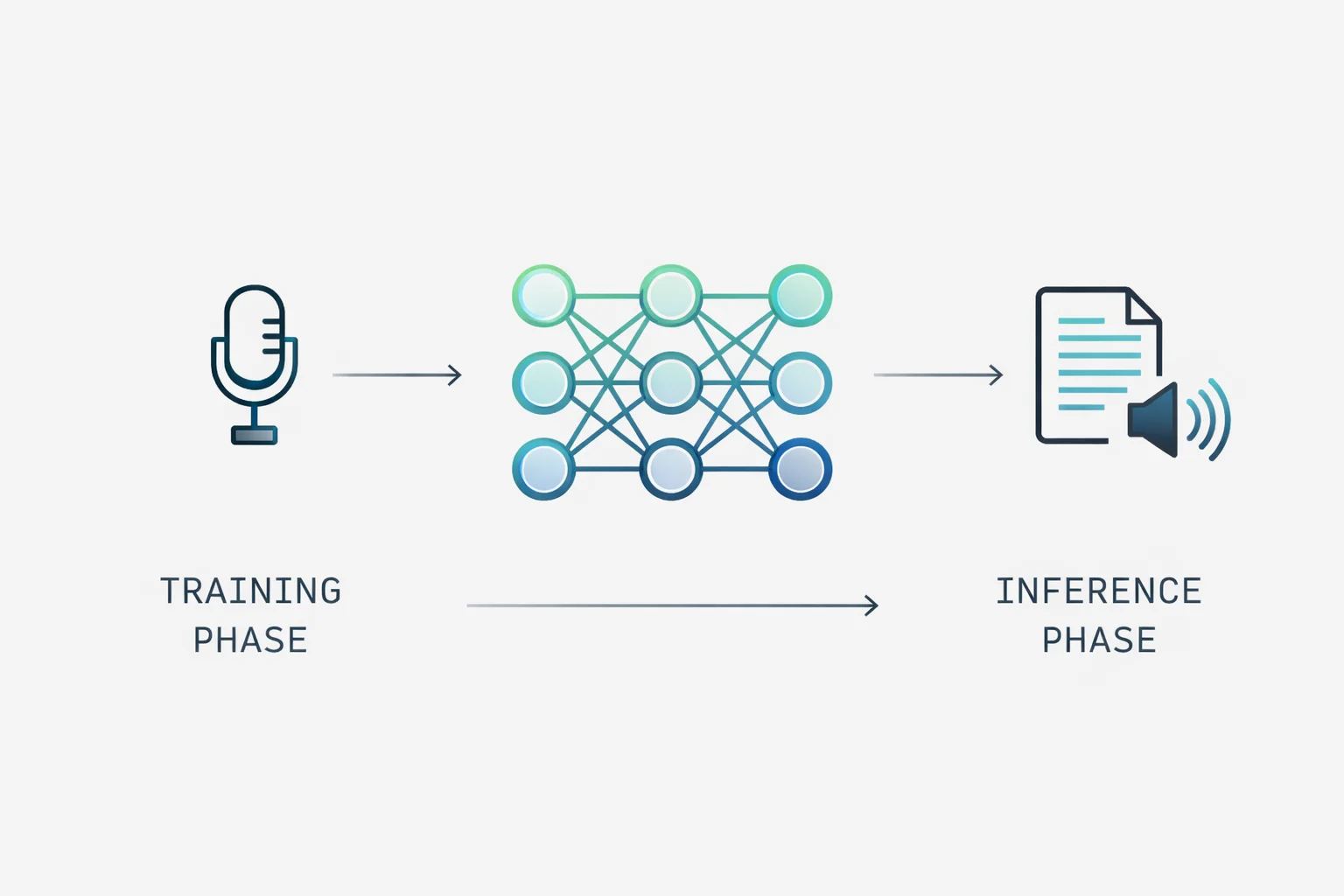

At its core, an AI Donald Trump voice is produced through a branch of machine learning called voice synthesis or voice cloning. Modern systems are built on deep neural networks, most commonly WaveNet, VITS, or transformer-based architectures trained on thousands of hours of audio recordings. When those recordings belong to a specific public figure, the model learns the precise acoustic signature of that individual: their pitch contour, cadence, vowel shaping, breathiness, and idiosyncratic speech patterns.

The process has two broad phases. First, the training phase ingests labeled audio and builds a voice model. Second, the inference phase takes a new piece of text as input and generates audio that mimics the target speaker. Early systems required tens of hours of clean audio per subject. Newer few-shot and zero-shot models can clone a voice from as little as three to thirty seconds of reference audio. Public figures with enormous media footprints supply abundant reference material either way, which is part of why Donald Trump, with decades of speeches, interviews, rallies, and television appearances on record, is so frequently cloned.
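To make the inference-phase data flow concrete, here is a toy sketch in Python with NumPy. It is only an illustration of how data moves through such a system, under loud assumptions: the weights are random and untrained, so the output is noise rather than speech, and every name and constant (`encode_text`, `encode_speaker`, `synthesize`, `N_MELS`, `HOP`) is hypothetical rather than taken from any real product. It assumes the common design in which the model first produces an intermediate spectrogram and then converts it to a waveform.

```python
import numpy as np

N_MELS = 80      # spectrogram channels per frame (assumed value)
EMB_DIM = 32     # size of text and speaker vectors (arbitrary for this toy)
HOP = 256        # waveform samples generated per spectrogram frame (assumed)

rng = np.random.default_rng(0)
# Untrained random weights: a real system learns these in the training phase.
CHAR_TABLE = rng.normal(size=(256, EMB_DIM))      # one vector per byte value
TO_MEL = rng.normal(size=(2 * EMB_DIM, N_MELS))   # stand-in "acoustic model"
TO_WAVE = rng.normal(size=(N_MELS, HOP))          # stand-in "vocoder"


def encode_text(text: str) -> np.ndarray:
    """Map each UTF-8 byte of the script to a vector: shape (n_bytes, EMB_DIM)."""
    ids = np.frombuffer(text.encode("utf-8"), dtype=np.uint8)
    return CHAR_TABLE[ids]


def encode_speaker(reference_audio: np.ndarray) -> np.ndarray:
    """Toy speaker encoder: squeeze reference audio into one EMB_DIM vector."""
    usable = len(reference_audio) // EMB_DIM * EMB_DIM
    return reference_audio[:usable].reshape(-1, EMB_DIM).mean(axis=0)


def synthesize(text: str, reference_audio: np.ndarray) -> np.ndarray:
    """Inference data flow: text + speaker vector -> spectrogram -> waveform."""
    text_vecs = encode_text(text)                               # (T, EMB_DIM)
    speaker = np.tile(encode_speaker(reference_audio), (len(text_vecs), 1))
    conditioned = np.concatenate([text_vecs, speaker], axis=1)  # (T, 2*EMB_DIM)
    mel = np.tanh(conditioned @ TO_MEL)                         # (T, N_MELS)
    return (mel @ TO_WAVE).reshape(-1)                          # (T * HOP,)


reference = rng.normal(size=16000 * 3)   # stands in for ~3 s of 16 kHz audio
audio = synthesize("Hello there.", reference)
print(audio.shape)   # (3072,) = 12 bytes * 256 samples per frame
```

The point to notice is the conditioning step: the same text yields different output when the speaker vector changes, which is the mechanism that lets one model speak in many voices.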

Why Trump’s Voice is Widely Cloned

Donald Trump ranks among the most cloned voices in AI history for a cluster of reasons that have nothing to do with politics and everything to do with data abundance and acoustic distinctiveness.

- Volume of public audio: Trump has been a nationally prominent media figure since the 1980s. His appearances on The Apprentice, campaign rallies, press briefings, debate stages, podcasts, and interviews add up to an extraordinary corpus of clean, broadcast-quality audio, ideal training material.

- Acoustic distinctiveness: Trump’s voice is unusually easy for neural networks to learn. It features a consistent low-pitched baseline, a characteristic rising intonation on certain phrases, deliberate pacing with dramatic pauses, and a limited phonetic range. Distinctive voices clone better than average-sounding ones.

- Cultural salience: Because he is one of the most recognized voices on the planet, audiences can immediately evaluate accuracy. That feedback loop drives developers to fine-tune Trump models more aggressively than they do for less recognizable targets.

- Demand for political satire: Parody and political commentary have always driven demand for voice impersonation. AI tools have dramatically lowered the barrier to entry for that tradition.

Real vs. AI-Generated: Can You Tell the Difference?

As recently as 2020, AI-generated speech was fairly easy for trained listeners to detect. Artifacts such as unnatural prosody, robotic word transitions, and faintly mechanical undertones gave away the audio as synthetic. Since 2024, the gap has narrowed dramatically. The best Trump AI voice generators now produce output that many listeners, in controlled studies, cannot reliably distinguish from authentic recordings, particularly in short clips under fifteen seconds.

Detection tools do exist. Companies including Pindrop, Resemble AI (via their Detect product), and AI Voice Detector offer classifiers trained to identify synthetic speech. Forensic audio analysts also look for telltale signs in spectrograms, including unnatural energy distribution in high-frequency bands, inconsistent formant transitions, and the absence of micro-variations in breath and lip noise that human speakers produce involuntarily. But as generative models improve, detection becomes harder, which is a core motivation behind emerging legislation covered later in this guide.
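As a rough illustration of one spectral cue mentioned above, the sketch below measures how much of a signal's energy sits in the high-frequency band. It is a minimal example under stated assumptions, not how any named detection product works: the function name `high_band_energy_ratio` and the 4 kHz cutoff are hypothetical choices, and a single number like this is nowhere near sufficient to classify audio as synthetic.

```python
import numpy as np


def high_band_energy_ratio(signal: np.ndarray, sample_rate: int,
                           cutoff_hz: float = 4000.0) -> float:
    """Return the fraction of spectral energy at or above cutoff_hz."""
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    power = np.abs(spectrum) ** 2
    total = power.sum()
    if total == 0:
        return 0.0  # silent input: no energy in any band
    return float(power[freqs >= cutoff_hz].sum() / total)


sr = 16000
t = np.arange(sr) / sr                                  # one second of samples
low_tone = np.sin(2 * np.pi * 200 * t)                  # energy only at 200 Hz
mixed = low_tone + 0.5 * np.sin(2 * np.pi * 6000 * t)   # adds a 6 kHz component

print(round(high_band_energy_ratio(low_tone, sr), 3))   # 0.0
print(round(high_band_energy_ratio(mixed, sr), 3))      # 0.2
```

In practice an analyst would compare such measurements across many short windows of a recording and against known-authentic reference audio, rather than reading anything into one value.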

Best Trump AI Voice Generators in 2026

Searching for a reliable Trump AI voice generator yields dozens of tools with wildly varying quality. Below is an honest breakdown of what works, what falls short, and which tool fits your specific use case.

Top Tools Compared

| Tool | Voice Accuracy | Free Tier | Best For |
| --- | --- | --- | --- |
| ElevenLabs | ★★★★★ | Limited | Professional-grade cloning, content creators |
| Speechify | ★★★★☆ | Yes | Quick generation, casual use |
| Resemble AI | ★★★★☆ | No | Custom voice building, developers |
| Murf AI | ★★★☆☆ | Yes | Simple voiceovers, beginners |
| FakeYou | ★★★☆☆ | Yes | Novelty, entertainment, meme content |

The table above reflects performance specifically on Donald Trump’s voice generation as of 2026. Accuracy ratings are based on blind listening comparisons across multiple sample inputs: a rally-style monologue, a conversational interview tone, and a short declarative statement. Scores may vary depending on the input type.

Free vs. Paid Trump AI Voice Generators

Free options exist but come with meaningful trade-offs. Platforms like FakeYou and the free tiers of Speechify and Murf AI let users generate short clips without payment. The limitations are typically: a cap on output length (often thirty seconds to two minutes), watermarked audio, reduced model quality compared to premium tiers, and usage restrictions that prohibit commercial distribution.

Paid plans from ElevenLabs, Resemble AI, and comparable professional platforms remove those restrictions and offer access to higher-fidelity models. For creators who need the AI Donald Trump voice for podcast content, satire productions, or video projects with genuine audience reach, the quality gap between free and paid is significant enough to justify the cost.

Step-By-Step: How to Generate a Trump AI Voice

The exact workflow varies by platform, but the general process follows these steps:

- Choose a platform from the comparison table above and create an account.

- Navigate to the voice library or voice cloning section. Most platforms maintain prebuilt celebrity voice models; look for Trump under political or celebrity categories, or simply search by name.

- Enter the text you want spoken. Keep initial tests short (one to three sentences) to evaluate quality before committing to a long script.

- Select output settings: audio format (MP3 or WAV), speech speed, and any available style parameters (conversational vs. formal, for example).

- Generate and review. Most platforms process short clips in under ten seconds.

- Iterate by adjusting speed, emphasis, or punctuation in your script. Commas, ellipses, and sentence breaks significantly influence pacing.

- Download or export the audio file.

One practical note: the quality of the text input matters more than many users expect. Trump’s authentic speech rhythm features short declarative sentences, repetition for emphasis, and informal syntax. Scripts written to mimic that style consistently produce more convincing output than formal, complex prose does.
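A quick way to check a script against that advice before spending generation credits is to measure sentence lengths. The helper below is a minimal sketch: the function names and the 12-word threshold are assumptions chosen for illustration, not values recommended by any platform.

```python
import re


def sentence_lengths(script: str) -> list[tuple[str, int]]:
    """Split a script after ., !, or ? and return (sentence, word_count) pairs."""
    # Limitation: an ellipsis followed by a space also counts as a break.
    parts = re.split(r"(?<=[.!?])\s+", script.strip())
    return [(p, len(p.split())) for p in parts if p]


def flag_long_sentences(script: str, max_words: int = 12) -> list[str]:
    """Return the sentences whose word count exceeds max_words."""
    return [s for s, n in sentence_lengths(script) if n > max_words]


script = (
    "This is a great test. A really great test. "
    "Although the preceding sentences were short, this one deliberately "
    "runs on for far longer than the twelve word limit allows."
)

for sentence, words in sentence_lengths(script):
    print(words, sentence)

# Only the third sentence exceeds the limit and is flagged for rewriting.
print(flag_long_sentences(script))
```

Flagged sentences are candidates for splitting into two or three shorter ones before regenerating the clip.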

Donald Trump AI Voice Generator

Which Generators Produce the Most Realistic Output

Among the platforms tested for this guide, ElevenLabs consistently ranks at the top for raw acoustic realism when generating Donald Trump’s voice. Their model captures the low register, the characteristic vowel elongation on stressed words, and the cadence of Trump’s speech better than competitors at comparable price points. The output benefits from ElevenLabs’ proprietary voice cloning architecture, which is trained on a large, diverse audio corpus.

Resemble AI places a close second for professional use cases, with the added advantage of a developer-facing API that enables integration into applications and pipelines. Their model sounds slightly more robotic on longer passages but excels at short, punchy declarative sentences, which happens to be the style Trump uses most.

FakeYou occupies a different niche entirely. It is free, community-driven, and unashamedly focused on entertainment and meme creation rather than professional production. Accuracy on FakeYou is inconsistent across community-contributed model versions, but the best-rated Trump models on the platform are surprisingly competitive for casual use.

Sample Audio Outputs and Quality Comparison

Across standardized test inputs, the following patterns emerged from platform comparisons:

- Short declarations (under ten words): All major platforms perform acceptably. Differences in quality are minor at this length.

- Medium monologues (thirty to ninety seconds): Quality divergence becomes apparent. ElevenLabs and Resemble AI maintain consistency. Free-tier and community tools often exhibit prosody drift; the pacing becomes unnatural as sentence length increases.

- Conversational tone: The hardest register to clone accurately. Trump’s conversational speech is more meandering and less rhythmically predictable than his rally style. Only the highest-end models handle this well.

- Emotional variation (excitement, skepticism, sarcasm): Premium models with style controls outperform basic TTS-style generators by a significant margin here. This is the area where the gap between premium and basic tools is most obvious.

Political AI Voices: Trump vs. Biden vs. Obama

The Trump AI voice does not exist in a vacuum. The broader category of political AI voices has exploded alongside it, and understanding the landscape requires examining how other major political figures have been cloned and how those clones compare in terms of quality, availability, and risk profile.

Joe Biden’s AI Voice Tools and Accuracy

The Joe Biden AI voice is the second most searched political voice clone, and for understandable reasons. Biden served as the 46th U.S. President and was Trump’s opponent in the 2020 election and, until he withdrew, in the 2024 race. His speech characteristics (a Delaware accent, an occasional stutter, a slower pace, and a tendency toward folksy asides) also make him acoustically distinctive enough for reliable cloning.

Most of the same platforms that offer Trump voice generation also host Biden models. ElevenLabs and FakeYou both carry Biden voices with community ratings. The Biden model on major platforms tends to rate slightly lower on accuracy than Trump models, largely because Trump’s more consistent, higher-energy speech pattern is easier for models to replicate than Biden’s more variable, quieter conversational style.

The Biden AI voice also carries a specific cautionary history: in January 2024, robocalls using a cloned Biden voice were sent to New Hampshire voters ahead of the primary, urging Democrats not to vote. This real-world misuse incident prompted regulatory scrutiny and became one of the most-cited examples in Congressional testimony on AI voice cloning risks.

Obama AI Voice

The Obama AI voice occupies interesting territory. Barack Obama has one of the most linguistically analyzed voices in American political history. His speech patterns, rhetorical structure, and tonal variation have been the subject of academic study. That same distinctiveness makes him a highly sought-after target for voice cloning.

Obama AI voice models are available on FakeYou, ElevenLabs, and several open-source model repositories, including Hugging Face. Quality varies widely. Obama’s speech is characterized by measured pacing, wide pitch range, clear articulation, and a tendency to rise in volume and intensity toward the end of key phrases. Capturing that full dynamic range proves more difficult for AI systems than replicating Trump’s flatter, more percussive delivery.

From a content standpoint, Obama AI voice content is used heavily in parody, satire, and entertainment, similar to Trump’s. However, Obama’s legal team has been more active in pursuing DMCA takedowns of content using his likeness without authorization, which means content using an Obama AI voice carries somewhat greater legal exposure than comparable Trump content, depending on jurisdiction and usage context.

Side-By-Side Comparison of Political AI Voice Clones

Across the three major political AI voices, the following summary holds as of 2026:

- Cloning difficulty, from easiest to hardest: Trump, then Biden, then Obama (hardest at full dynamic range).

- Tool availability: All three are available on major platforms; Trump has the widest model selection.

- Regulatory scrutiny: All three are politically sensitive. The Biden robocall incident has put Biden-adjacent content under the most active scrutiny from election officials.

- Content use cases: All three are primarily used for satire, parody, entertainment, and commentary, the most legally defensible categories of use.

Celebrity AI Voices: Beyond Politicians

David Attenborough AI Voice

The David Attenborough AI voice holds a special place in the AI voice cloning ecosystem, and not just for obvious reasons. Attenborough’s voice is arguably the most beloved narration voice in the English-speaking world. His BBC Natural History Unit documentaries, including Planet Earth, Blue Planet, and Life, have made his voice globally recognizable, transcending any single country or political context.

AI versions of the Attenborough voice have been used to narrate everything from nature documentary parodies to personal social media content. A particularly viral trend in 2023 and 2024 involved users feeding mundane daily activities, such as grocery shopping, commuting, and making coffee, as scripts into David Attenborough AI voice generators, producing absurdist, deadpan narration that highlighted both the tools’ technical capabilities and the voice’s cultural resonance.

For high-quality David Attenborough AI voice generation, ElevenLabs again leads, with a model that captures the measured pace, precise diction, and characteristic warmth of Attenborough’s narration style. Murf AI and Speechify offer accessible alternatives, though neither matches ElevenLabs’ nuance in longer passages. The BBC has not taken a formal public position on AI voice cloning of Attenborough specifically. Still, Attenborough himself has expressed discomfort with deepfake technology in general, a consideration for creators thinking about reputational risk beyond legal exposure.

Which Public Figures are Most Cloned and Why

Across platforms and open-source repositories, a clear pattern emerges: public figures attract the most cloning activity. The common denominators are: an enormous volume of high-quality public audio, distinctive and recognizable voice characteristics, and high cultural salience that provides audiences with an immediate quality benchmark.

The most cloned voices globally include Donald Trump, Joe Biden, Barack Obama, David Attenborough, Morgan Freeman, Joe Rogan, and Elon Musk. Each combines the three factors above in varying proportions. Morgan Freeman and Joe Rogan are particularly popular targets for entertainment content because their voices carry strong emotional associations (gravitas and warmth for Freeman, casual authority for Rogan) that map well to a wide range of scripts.

What all of them share: they are people whose voices audiences will recognize and evaluate instantly. That recognizability is the engine driving demand, the feedback mechanism improving models, and, ultimately, the risk factor attracting regulatory attention.

Is Using Trump’s AI Voice Legal?

Current AI Voice Cloning Regulations

The regulatory landscape around AI voice cloning has shifted substantially in the past eighteen months, and 2026 marks a genuinely pivotal moment. As of the time of writing, the following represents the state of play in the United States and key international jurisdictions.

At the federal level in the U.S., the NO FAKES Act, a bipartisan bill targeting unauthorized digital replicas of real people, including their voices, has advanced through committee hearings. The bill would create a federal right for individuals to control digital replicas of their likeness and voice, and impose liability on platforms that host unauthorized synthetic content. It has not yet been signed into law, but its momentum reflects genuine bipartisan concern.

State-level laws have moved faster. Tennessee’s ELVIS Act (Ensuring Likeness Voice and Image Security) took effect in 2024, becoming the first state law specifically protecting artists’ voices from unauthorized AI cloning. California passed AB 2355, requiring disclosure labels on AI-generated content in political advertising. Texas, New York, and a growing number of other states have introduced or passed similar legislation, now in varying stages of implementation.

Internationally, the European Union’s AI Act, which entered into force in August 2024 with obligations phasing in over the following years, includes provisions requiring transparency labeling for AI-generated audio and video content, with meaningful penalties for non-compliance. The UK is advancing a similar framework under its AI Safety Institute’s advisory output.

Consent, Deepfakes, and Political AI Voice Laws

The specific intersection of AI voice cloning and political speech has attracted the most intense regulatory focus. The core concern is straightforward: a synthetic voice indistinguishable from a candidate’s real voice, deployed at scale via automated calls or social media, poses a direct threat to electoral integrity. The New Hampshire Biden robocall incident crystallized that concern, moving it from theory to documented real-world harm.

In response, the Federal Communications Commission (FCC) ruled in February 2024 that AI-generated voices in robocalls are covered under the Telephone Consumer Protection Act (TCPA), making their unauthorized use illegal. The Federal Election Commission (FEC) is considering rulemaking that would require disclosure of AI-generated content in political advertising.

Beyond electoral content, the general framework emerging across jurisdictions is built on two pillars: consent and disclosure. Using a cloned voice of a living person without their consent for commercial gain or reputational harm is increasingly treated as a civil liability under right-of-publicity law. Failing to disclose that audio content is AI-generated, particularly in contexts where audiences might reasonably assume it is authentic, creates additional exposure.

Responsible Use Guidelines

For creators who want to use Trump AI voice, Biden AI voice, Obama AI voice, or any other political or celebrity cloned voice, the following principles reflect both current best practices and emerging legal standards:

- Label clearly: Any content using an AI-generated voice should be explicitly labeled as AI-generated. This is both an emerging legal requirement in many jurisdictions and a basic ethical standard.

- Avoid false attribution: Do not create content that depicts a real person saying something they did not say in a context that could be mistaken for authentic. Satire and parody that are clearly framed as such fall into a different legal and ethical category than content designed to deceive.

- Commercial use requires caution: Using a cloned voice in content that generates revenue directly or indirectly significantly increases legal exposure, particularly under state right-of-publicity laws.

- Electoral content is the highest-risk category: Creating, distributing, or broadcasting AI-generated political voices around elections carries the greatest legal risk and the greatest potential for real-world harm.

- Platform terms matter: Most major platforms prohibit non-consensual synthetic media. Creating content for distribution on YouTube, TikTok, or similar platforms requires compliance with their specific policies and applicable law.

Final Thoughts

The Trump AI voice category is no longer a niche internet curiosity. It sits at the intersection of some of the most consequential technology, legal, and cultural debates of the decade: the reliability of audio evidence, the boundary between parody and deception, the speed at which legislation can keep pace with capability, and the democratization of a type of creative and political expression that was previously available only to professional impressionists and broadcast studios.

For most people searching for a Trump AI voice generator, the intent is entertainment and curiosity, not manipulation. That context matters, and the technology itself is genuinely fascinating. But the existence of compelling legitimate uses does not dissolve the responsibility to use these tools carefully, label synthetic content transparently, and stay current with a regulatory environment that is moving faster than almost any other area of AI governance.

The neural networks that let a hobbyist generate a thirty-second comedy clip are the same kind that produced the fabricated political robocalls sent to thousands of New Hampshire voters. The tools are neutral. The consequences of their use are not.

Frequently Asked Questions (FAQs)

Is there a free Trump AI voice generator?

Yes. FakeYou offers free Trump AI voice generation using community-built models, with no account required for basic use. Speechify and Murf AI both offer free tiers with limited output lengths. The trade-off for all free options is lower audio quality than on paid platforms, output length caps, and, in some cases, watermarked audio. For casual, non-commercial use, free tools are adequate. For anything requiring broadcast quality or commercial distribution, a paid platform is a better investment.

How accurate is the AI Trump voice?

Accuracy varies significantly by platform and use case. On short declarative sentences and rally-style monologues, the best tools in 2026, particularly ElevenLabs and Resemble AI, produce output that many listeners cannot reliably distinguish from authentic Trump recordings. Accuracy drops in longer passages, in conversational registers, and in emotionally nuanced delivery. The technology is genuinely impressive but not yet perfect across all contexts.

Can I use an AI Trump voice for commercial content?

This is a legal question with a nuanced answer that varies by jurisdiction, use case, and the framing of the content. Generally speaking, clearly labeled parody and satire occupy the most defensible legal ground. Content designed to appear authentic, or that generates direct revenue from the use of Trump’s likeness without authorization, carries significant risk under right-of-publicity laws in states including California, New York, and Tennessee. Consult a lawyer familiar with AI and intellectual property law before commercializing any content that uses a cloned public figure’s voice.

What’s the difference between voice cloning and TTS?

Text-to-speech (TTS) in its traditional form generates synthetic speech from text using a model trained on a generic voice or a limited set of canonical voices. The output is recognizably artificial and not associated with any specific real person. Voice cloning is a more specific process: it trains a model on the audio of a particular individual and then generates speech that mimics that person’s unique vocal characteristics. A Donald Trump AI voice generator is a voice cloning application, not a generic TTS tool. The distinction matters both technically and legally; cloning a real person’s voice introduces right-of-publicity and consent considerations that generic TTS does not.

How do AI voice generators actually work?

Modern AI voice generators are built on deep learning architectures, most commonly transformers or diffusion models adapted for audio. During training, the model processes large amounts of audio data paired with transcripts, learning the relationship between phonetic content and acoustic output. For voice cloning specifically, the model learns to condition its output on the acoustic signature of a target speaker, essentially applying that speaker’s voice characteristics to any new text input. During inference (when you type a script and press generate), the model runs a forward pass through the neural network, producing audio waveforms either directly or via an intermediate spectrogram representation that is then converted to audio. On modern hardware, the whole process takes seconds for short clips.