Imagine hearing Darth Vader personally narrate your voicemail greeting. Or Kanye West reading your morning news. Or SpongeBob SquarePants guiding you through a recipe step by step. Thanks to the explosive rise of AI voice technology, none of this is science fiction anymore; it’s Tuesday on the internet.

AI-generated character and celebrity voices have become one of the fastest-growing corners of the web. Millions of people search every month for their favorite fictional and real-world voices, curious whether an AI version exists, what it sounds like, and where to use it. This guide answers all those questions in one place.

Whether you’re here for Darth Vader, Goku, Kanye, SpongeBob, or the Mortal Kombat announcer, this is the only resource you need.

What Is an AI Voice Model?

Before diving into specific characters and celebrities, it helps to understand what’s actually happening when someone generates a Darth Vader AI voice or a Kanye AI voice. The technology is more accessible and more surprising than most people expect.

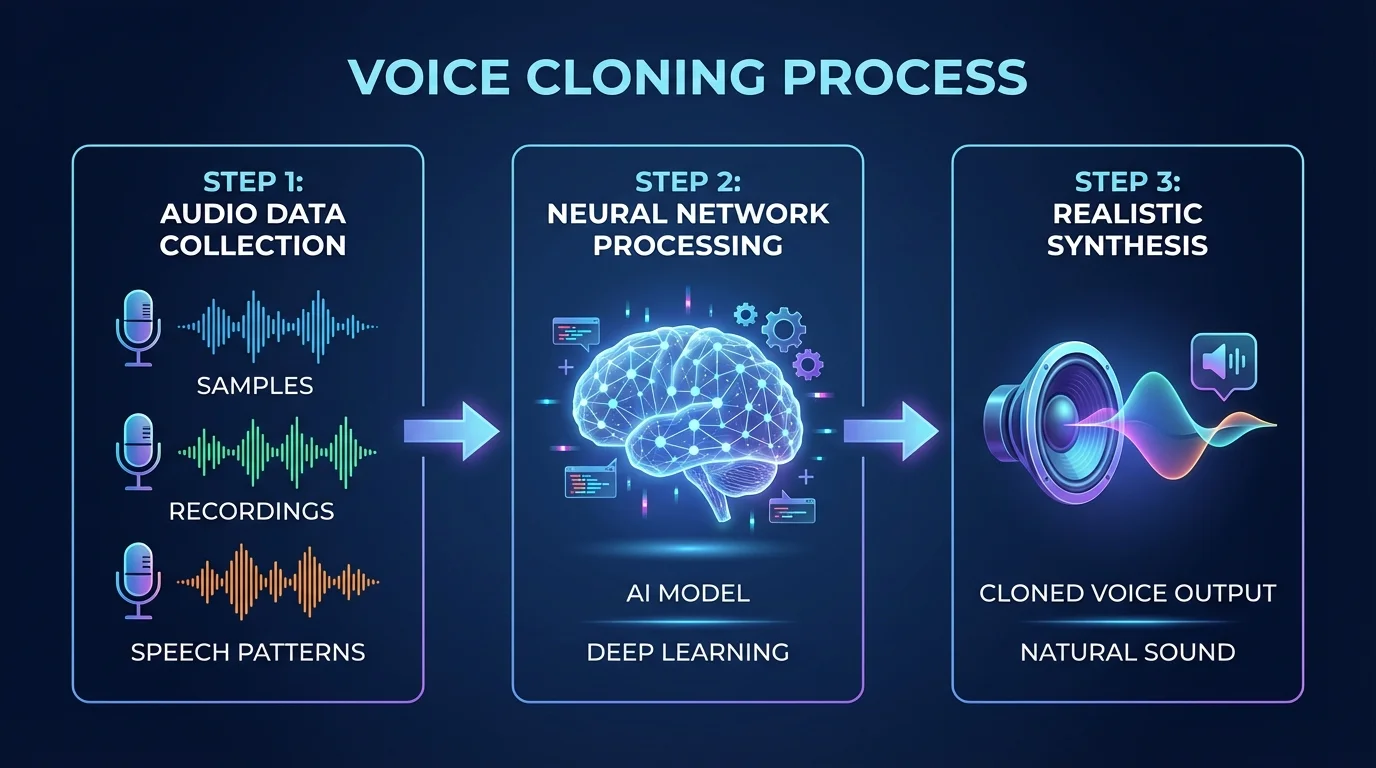

How AI Voice Cloning Technology Works

AI voice cloning is the process of training a machine learning model on recordings of a person’s (or character’s) voice, then using that model to generate new speech that sounds like the original speaker.

At its core, the system works in three stages. First, it ingests a large volume of audio samples, ideally many hours of clean recordings from the target voice. Second, the model analyzes the speaker’s unique acoustic characteristics: pitch range, cadence, resonance, breathiness, pronunciation patterns, and emotional tone. Third, it learns to reproduce those characteristics on demand, generating new speech from typed text inputs.
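The second stage, analyzing acoustic characteristics, can be illustrated with one of its simplest ingredients: estimating pitch. The sketch below is not how any particular cloning product works. It is a minimal autocorrelation pitch estimator run on a synthetic tone, and the names `make_tone` and `estimate_pitch` are illustrative assumptions. Real systems learn far richer features with neural networks.

```python
import math

def make_tone(freq_hz, sample_rate=8000, num_samples=800):
    """Synthesize a pure sine tone as a stand-in for one frame of recorded voice."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(num_samples)]

def estimate_pitch(samples, sample_rate=8000, fmin=60.0, fmax=400.0):
    """Estimate the fundamental frequency from the best-matching autocorrelation lag."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        overlap = len(samples) - lag
        # Average product of the signal with a copy of itself shifted by `lag`.
        score = sum(samples[i] * samples[i + lag] for i in range(overlap)) / overlap
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# A 100 Hz tone sits in the low range typical of a deep bass voice.
print(estimate_pitch(make_tone(100.0)))
```

A single number like this captures only pitch. Cadence, resonance, and breathiness need additional features, which is part of why cloning models are trained on hours of audio rather than hand-built rules.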

Modern AI voice models use neural network architectures, particularly transformer-based systems, which have improved dramatically over the past three years. Early cloning tools produced robotic, obviously artificial output. Today’s best models capture subtle qualities, such as the slight rasp in Darth Vader’s breathing cadence or the melodic rise in Hatsune Miku’s synthesized timbre.

The reason character and celebrity voices have exploded in popularity is simple: these voices carry enormous emotional weight. Hearing Darth Vader’s voice triggers decades of cinematic memory. Hearing Drake’s cadence activates associations with an entire era of music. AI allows anyone to summon those associations for creative, entertainment, or novelty purposes.

TTS vs Voice Cloning vs Real-Time Voice Changers

These three terms get used interchangeably online, but they describe meaningfully different technologies.

Text-to-Speech (TTS)

Text-to-Speech (TTS) is the broadest category. It converts typed text into spoken audio. Traditional TTS (think early GPS navigation or Windows Narrator) used rule-based synthesis, which never sounded truly human. Modern neural TTS, by contrast, produces remarkably natural-sounding speech, and many AI voice tools are built on advanced TTS foundations.

Voice Cloning

Voice cloning is more specific. It refers to training a model directly on audio samples from a specific individual (real or animated) to reproduce that individual’s unique voice signature. Voice cloning produces higher-fidelity results than generic TTS applied to character voices, because the model has actually learned from the source material. The Darth Vader AI voice models that have gained the most traction online are typically cloned rather than approximated.

Real-Time Voice Changers

Real-time voice changers are a separate product category entirely. These tools transform your live voice as you speak. They’re used in gaming, streaming, and content creation to make the speaker sound like someone else in real time. Tools like Lyra sit in this category. Because real-time changers serve a distinct transactional use case, they’re not the focus of this guide, which covers the discovery and exploration of prebuilt AI voice models.

Why Character and Celebrity Voices Dominate AI Search

The answer is culture. Certain voices carry such strong associations that hearing them is inseparable from an emotional reaction. Darth Vader doesn’t just speak; he commands, he threatens, he looms. The Mortal Kombat announcer doesn’t just say words; he triggers adrenaline. Mickey Mouse doesn’t just talk; he radiates nostalgia.

When AI can reproduce those voices, the result is inherently fascinating to the millions of people who grew up with them. It raises questions about authenticity, about technology’s relationship to memory, and sometimes it’s simply hilarious; there’s something surreal about hearing SpongeBob narrate a congressional speech or Kanye voice an audiobook.

That cultural fascination is why these searches have grown so rapidly, and why a single comprehensive guide can capture traffic from dozens of different character and celebrity voice queries.

Darth Vader AI Voice — The Most Searched Character Voice Online

No AI character voice generates more search interest than Darth Vader’s. The combination of James Earl Jones’s legendary bass, the iconic breathing rhythm, and five decades of cultural weight makes Darth Vader’s voice one of the most recognizable sounds in human history. It’s no surprise that AI developers, enthusiasts, and creative teams were among the first to attempt a faithful reproduction.

How the Darth Vader AI Voice Was Created

The Darth Vader AI voice has an unusual real-world backstory that makes it more remarkable than most character voice clones. In 2022, Lucasfilm worked with the Ukrainian company Respeecher to recreate James Earl Jones’s younger voice with AI voice synthesis for the Disney+ series Obi-Wan Kenobi. Jones himself signed off on the use of his archived recordings to train the model. This was one of the first high-profile examples of a living actor explicitly consenting to the reproduction of their voice by AI, a landmark moment for the industry.

Beyond official productions, independent AI voice communities have developed their own Darth Vader voice models using publicly available recordings from the films and the expanded universe. These community-built models vary significantly in quality, but the best ones capture the key acoustic signatures: the deep resonant bass (James Earl Jones’s voice typically sits around 85–100 Hz at its foundation), the iconic vocoded breathing layer, the deliberate measured cadence, and the subtle echo effect that characterized the original film recordings.

What makes a Darth Vader AI voice convincing isn’t just pitch; it’s the synthesis of multiple acoustic layers. A model that only replicates the bass frequency misses the breathing texture. One that nails the breathing but loses the deliberate pacing sounds wrong in a different way. The most successful Darth Vader AI voice models treat the voice as a system rather than just a tone.

Where to Hear and Use the Darth Vader AI Voice

Multiple platforms have hosted Darth Vader voice models, though availability changes frequently as copyright enforcement evolves. ElevenLabs, one of the leading AI voice platforms, has featured community-created Star Wars voice packs at various points. Voicemod, Uberduck, and FakeYou have also hosted Darth Vader models that allow users to type text and generate audio.

For creative projects, content creators have used the Darth Vader AI voice for YouTube voiceovers, podcast intros, gaming content, meme videos, and novelty audio messages. The voice works particularly well for authoritative monologue delivery, unsurprisingly, given that Vader himself was essentially a walking monologue machine.

One practical tip: if you’re generating Darth Vader AI voice content for a creative project, input text that matches his natural speech patterns. Use short declarative sentences. Indicate dramatic pauses with punctuation. Keep contractions to a minimum. The AI model will produce far more convincing output if the text itself sounds like something Vader would actually say.
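As a rough illustration of that tip, the following sketch pre-processes a script before it is pasted into a voice tool. It expands a few common contractions and flags sentences that run long. The contraction table, the eight-word limit, and the helper names are all assumptions made for this example, not features of any particular platform.

```python
import re

# Illustrative, deliberately tiny contraction table (an assumption; extend as needed).
CONTRACTIONS = {
    "don't": "do not",
    "can't": "cannot",
    "won't": "will not",
    "it's": "it is",
    "you're": "you are",
    "i'm": "I am",
}

_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(c) for c in CONTRACTIONS) + r")\b",
    re.IGNORECASE,
)

def expand_contractions(text):
    """Replace known contractions so the line reads more formally."""
    text = text.replace("\u2019", "'")  # normalize curly apostrophes first

    def swap(match):
        word = match.group(0)
        expanded = CONTRACTIONS[word.lower()]
        # Preserve a leading capital, e.g. "Don't" becomes "Do not".
        if word[0].isupper():
            expanded = expanded[0].upper() + expanded[1:]
        return expanded

    return _PATTERN.sub(swap, text)

def long_sentences(text, max_words=8):
    """Return sentences longer than max_words, which may need splitting."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]

script = "Don't fail me. It's your destiny."
print(expand_contractions(script))
```

The same idea works in reverse for an excitable character: a check could flag sentences that lack exclamation points instead of ones that run long.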

Darth Vader vs Jarvis AI Voice: A Side-by-Side Comparison

Two of the most searched AI character voices sit at opposite ends of the tonal spectrum, and comparing them reveals a lot about how AI voice technology handles character.

Darth Vader’s voice is built on bass, authority, and menace. The acoustic profile is wide, resonant, and slow. An AI reproducing this voice must handle the low-frequency range accurately, or the output sounds hollow.

Jarvis’s voice, based on Paul Bettany’s performance in the Iron Man films, is an entirely different challenge. Jarvis is higher-pitched, clipped, precisely enunciated, and carries a dry British wit that’s almost as important as the voice itself. Whereas Darth Vader’s power comes from weight, Jarvis’s appeal comes from precision and personality.

Jarvis AI voice models have gained significant traction, particularly in the tech and smart-home communities, where people use them to build custom voice assistant interfaces. Setting up a Home Assistant or Alexa skill with a Jarvis voice layer has become a popular project for enthusiasts. The Jarvis AI voice is also widely used in gaming content on YouTube, where its association with Iron Man gives viewers instant context.

Both voices are achievable with current AI technology, but they expose a fundamental challenge: great character voice AI isn’t just voice synthesis. It’s personality synthesis. The best implementations of both voices understand that the character is bigger than the acoustic profile.

Iconic Cartoon and Animation AI Voices

Animated characters present a unique challenge and opportunity for AI voice technology. Unlike real human voices, animated voices are often already stylized, exaggerated, and acoustically distinct. That distinctiveness makes them easier to identify and, paradoxically, sometimes harder to perfectly replicate because any deviation from the exaggerated original is immediately noticeable.

SpongeBob AI Voice Generator

SpongeBob SquarePants may be the single most popular animated AI voice on the internet. Tom Kenny’s performance, a high, nasal, breathlessly enthusiastic delivery with a distinctive laugh, has been the subject of countless AI experiments, memes, and creative projects.

Multiple SpongeBob AI voice generators exist online, and they vary considerably in quality. The best ones capture not just the pitch range (SpongeBob’s voice sits unusually high, with frequent upward inflection at the end of phrases) but also the breathiness and the slight adenoidal quality that makes it immediately recognizable.

To use a SpongeBob AI voice generator effectively, write your text in the cadence of the character. SpongeBob speaks in bursts of enthusiasm followed by sudden deflation. He uses exclamation points more than periods. He elongates certain vowels. When you input text that matches these patterns, even a mid-tier AI model will produce results that feel distinctly SpongeBob-like.

Common uses include meme audio, YouTube videos, gaming clips (SpongeBob AI voice commentary on competitive gaming has become its own mini-genre), and novelty social media content. The character’s universally recognizable voice makes it one of the highest-engagement AI voice choices for content creators.

Squidward, Plankton, and the Full SpongeBob Cast in AI

The SpongeBob AI voice ecosystem doesn’t stop at the title character. Squidward’s deep, nasal, world-weary monotone, performed by Rodger Bumpass, has become a meme staple in its own right. Plankton’s tiny, scheming, high-pitched delivery (Mr. Lawrence) offers a comedic contrast. Together, they represent a rich palette of character voices that AI enthusiasts have worked to recreate.

The Squidward AI voice is particularly popular for deadpan commentary; his inherent tone of exhausted disdain translates well to reaction videos and other forms of commentary. Several TikTok and YouTube creators have built substantial followings by using AI Squidward to narrate mundane situations, perfectly capturing the character’s essential comedic energy.

Plankton’s AI voice is technically more challenging because the character’s voice sits at an unusual intersection of high pitch and aggressive articulation. Models that simply pitch-shift a standard voice upward miss the nasal quality and the specific rhythmic pattern of Mr. Lawrence’s performance.
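To see why simple pitch-shifting falls short, here is a minimal sketch of the naive approach: resampling a clip with linear interpolation. The function name `naive_pitch_shift` is an assumption for illustration. Playing samples back faster raises the pitch, but it also shortens the clip and scales every other frequency along with it, so the qualities that define a character’s timbre are shifted rather than preserved.

```python
def naive_pitch_shift(samples, factor):
    """Resample by linear interpolation; factor > 1 raises pitch and shortens the clip."""
    out_len = int(len(samples) / factor)
    out = []
    for i in range(out_len):
        pos = i * factor                      # fractional position in the source clip
        left = int(pos)
        right = min(left + 1, len(samples) - 1)
        frac = pos - left
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out

clip = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
shifted = naive_pitch_shift(clip, 1.5)
print(len(clip), len(shifted))  # the shifted clip is shorter than the original
```

A cloned model avoids this trade-off because it generates the target voice directly instead of warping a different one.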

Mickey Mouse AI Voice — Disney Characters in TTS

Mickey Mouse represents one of the most legally sensitive AI voice territories, which makes the search volume all the more striking. Disney is famously aggressive in protecting its IP, and Mickey’s voice, a falsetto that Walt Disney himself originated and that has been maintained with remarkable consistency ever since, is among the company’s most valuable assets.

Despite this, AI voice communities have produced Mickey Mouse voice models that have circulated online. From a purely technical standpoint, Mickey’s voice is a fascinating challenge: it’s a highly processed falsetto with a specific vibrato pattern and a characteristic breathiness on certain consonants that’s hard to isolate and reproduce.

The legal reality is that using AI-generated Mickey Mouse voice content for any commercial purpose is legally precarious territory. For pure novelty or educational purposes, AI Mickey voice tools exist, but users should be aware of the IP landscape before publishing anything that features the character’s voice or likeness.

Smurfette, Santa, and Seasonal Character Voices

Not all AI character voices carry the same cultural weight year-round. Smurfette’s AI voice, with its light, slightly breathy, playfully high register, spikes in search interest in waves tied to media releases and nostalgia cycles. The Smurfs occupy a specific corner of animated nostalgia, and their voices (cartoonishly Belgian-accented, childlike) are technically interesting because the AI must handle not just pitch but accent and rhythm simultaneously.

Santa Claus represents an entirely different phenomenon. Unlike most character voices, Santa doesn’t have one canonical voice; he’s been played by countless actors, each with their own interpretation. The Santa AI voice search reflects a desire for a certain archetypal quality: deep, warm, jolly, with a specific pattern of “Ho ho ho” interjection. AI platforms have built Santa voice models for commercial applications, holiday marketing, seasonal customer service interfaces, and children’s apps, making this one of the few character voices with a clear commercial use case beyond novelty.

Mario AI Voice — Gaming Characters Brought to Life

Mario’s voice has an unusual history. Charles Martinet voiced the character for decades, delivering a distinctive, high-pitched, Italian-accented, enthusiastic style that became one of gaming’s most recognizable sounds. When Martinet stepped down in 2023, it raised immediate questions about the future of the voice and intensified interest in AI voice preservation projects.

The Mario AI voice community has been active in creating models based on Martinet’s extensive catalog of recordings. The challenge is considerable: Mario’s voice is so specific, so cartoonishly precise, that the margin for error is tiny. A slightly wrong vowel or a missed accent pitch, and it immediately sounds like a generic Italian approximation rather than Mario.

Beyond pure novelty, the Mario AI voice has found an interesting niche in speedrunning and gaming content communities, where creators use it to add character commentary to gameplay videos. The voice’s inherent cheerfulness makes it well-suited to positive or comedic framing, though it obviously lacks the range for anything dramatically serious.

Anime and Video Game Character AI Voices

Anime and gaming communities have been among the most enthusiastic early adopters of AI voice technology, for a practical reason: they have an enormous appetite for fan-created content, and AI voice tools dramatically lower the barrier to producing audio featuring beloved characters.

Gojo AI Voice (Jujutsu Kaisen)

Satoru Gojo has become one of anime’s defining characters of the 2020s, and his voice, both in the original Japanese (Yuichi Nakamura) and the English dub (Kaiji Tang), carries a signature cockiness, casual authority, and occasional playful mockery that fans find deeply appealing.

The Gojo AI voice searches reflect this fandom energy. The English dub voice has been more widely replicated in AI models accessible to Western audiences, while Japanese-language AI voice communities have worked with Nakamura’s performance. What makes Gojo’s voice distinctive from an AI standpoint is the tonal range he moves through, from casual banter to intense declaration within a single scene, requiring a model that can handle emotional register shifts, not just a consistent tone.

For fan content creators, a convincing Gojo AI voice opens up possibilities for fan dubs of manga chapters, audio drama interpretations of non-animated story arcs, and the vast genre of fan-imagined conversations between characters from different series.

Goku AI Voice — Dragon Ball Z Voices in TTS

If Gojo represents the current generation of anime fandom, Goku is its bedrock. Sean Schemmel’s English dub voice for Goku, particularly the iconic screaming-through-transformations delivery, has been a meme foundation for decades. Masako Nozawa’s original Japanese performance is, if anything, even more technically demanding to replicate, featuring a specific pitch and breathiness that Nozawa has maintained since the anime debuted in 1986.

The Goku AI voice searches skew toward informational content; people want to hear what an AI version sounds like, typically to experience the novelty or for fan content. The transformation scream is a popular test case: it represents extreme vocal performance that pushes AI voice models to their limits.

AI Goku voice generators have been used for everything from fan-made audio fights to gaming commentary to meme audio, and the character’s universal recognition across generations makes the content immediately legible to a vast audience.

Hatsune Miku AI Voice and Singing Models

Hatsune Miku occupies a fascinating position in this conversation because she has always been a synthetic voice; she has never been a real human being. Created by Crypton Future Media in 2007 using Yamaha’s Vocaloid engine, Miku’s voice was synthesized from the beginning, making her an early ancestor of modern AI voice technology.

The search for “Hatsune Miku AI voice” and “Miku AI voice” reflects something interesting: people want to use modern AI voice tools to recreate or expand a voice that was itself artificial. This creates a recursive quality: AI modeling an AI voice, a development the Vocaloid community has embraced rather than found problematic.

Modern AI implementations of Miku’s voice tend to be more flexible than the original Vocaloid software, allowing for more natural phrasing and emotion. Several tools have allowed creators to generate Miku-style vocals for entirely new compositions, which have been embraced by the doujin music community as a creative tool rather than seen as a threat to the original character.

The Hatsune Miku AI voice is one of the clearer examples of AI voice technology serving a genuinely creative rather than purely novelty purpose.

Mortal Kombat Announcer AI Voice

Few voices in gaming carry the visceral punch of the Mortal Kombat announcer. Ed Boon and the Mortal Kombat team developed a specific, booming, authoritative, slightly echo-processed announcer delivery that has become one of gaming’s most iconic audio signatures.

“FINISH HIM!” is three syllables. In the context of Mortal Kombat, those three syllables carry more weight than many full speeches. The AI voice searches for the Mortal Kombat announcer reflect a desire to harness that audio power for other contexts, such as gaming commentary, party games, content creation, and novelty alerts.

From a technical standpoint, the announcer’s voice is heavily processed with reverb, compression, and a specific EQ curve, which are as much a part of the identity as the base voice. AI models that replicate only the vocal performance without the processing signature miss much of what makes the voice recognizable.
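The processing chain described above can be approximated in a few lines. This is a toy sketch under stated assumptions: a single-tap echo stands in for real reverb, the compressor is a crude per-sample gain reduction, and the function names and default values are invented for illustration. Production audio tools use far more sophisticated algorithms.

```python
import math

def add_echo(samples, delay_samples, decay=0.5):
    """Mix a delayed, quieter copy of the signal into itself (single-tap echo)."""
    out = list(samples) + [0.0] * delay_samples  # leave room for the echo tail
    for i, s in enumerate(samples):
        out[i + delay_samples] += s * decay
    return out

def compress(samples, threshold=0.5, ratio=4.0):
    """Crude compressor: shrink the part of each sample that exceeds the threshold."""
    out = []
    for s in samples:
        mag = abs(s)
        if mag > threshold:
            mag = threshold + (mag - threshold) / ratio
        out.append(math.copysign(mag, s))
    return out

dry = [1.0, 0.0, 0.0, 0.0]             # a single click as the "dry" voice
wet = compress(add_echo(dry, delay_samples=2))
print(wet)
```

Applying effects like these to a clean vocal clone is one way to get closer to the processed signature, though matching the original EQ curve would take careful listening and adjustment.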

Hazbin Hotel Adam TTS Voice Explained

Hazbin Hotel’s lineup of angel characters has generated substantial fandom, and Alex Brightman’s voice as Adam in the Amazon Prime Video series has developed a dedicated fanbase. Adam’s voice combines theatrical belting with a peculiar mix of arrogance and absurdity, a blend Brightman executes with remarkable commitment.

The AI voice interest around Hazbin Hotel characters reflects the show’s intensely passionate fanbase and its multimedia approach to storytelling. Audio fan content, including AI voice recreations of characters in scenarios not covered by the show, is a natural extension of that fandom energy.

The Adam AI TTS voice is technically challenging because the character’s delivery is so performative and stylized. He essentially speaks and sings in a way that’s designed to be larger-than-life, which means AI models have to handle significant dynamic range and deliberate melodrama.

Celebrity and Rapper AI Voices

If fictional character AI voices are fascinating, celebrity voice clones are genuinely controversial, and that controversy is part of what makes them so sought after. The ethical and legal questions surrounding the replication of a living person’s voice are real and unresolved, making these voices compelling both as technological demonstrations and as cultural lightning rods.

Kanye West AI Voice

Kanye West’s voice has been one of the most replicated in the AI voice community, for reasons that go beyond pure celebrity fame. Ye’s specific delivery (the cadence, the ad-lib patterns, the way he drops to a near-whisper before explosive emphasis) is distinctive enough to be immediately recognizable but also well-documented enough across decades of recorded material to train models on.

Community-built Kanye AI voice models have used both his rapping delivery and his speaking voice as source material, producing models that function differently for different purposes. The rapping voice model captures his flow, breath patterns, and the specific way he attacks consonants. The speaking voice model is closer to what you’d hear in interviews: thoughtful, sometimes stream-of-consciousness, with the characteristic long pauses before statements he considers significant.

The Kanye AI voice has been used extensively for meme content, fan music productions, and novelty audio. The “Kanye AI voice” and “Kanye West AI voice” searches are closely related and likely capture the same user intent: people with slightly different search habits looking for the same tool and output.

Drake AI Voice Generator

Drake’s AI voice has a particular cultural context: it became one of the most-discussed celebrity voice clones in the music world following a 2024 incident in which a song featuring an AI voice cloned to sound like Drake went viral amid public feuding with another artist. The incident forced mainstream conversations about AI music, consent, and intellectual property that had previously been confined to tech circles.

Regardless of the controversy, the Drake AI voice generator searches reflect a genuine interest in the technology. Drake’s voice, warm and melodic, with specific Toronto inflections and a signature blend of rapping and singing, is one of the more technically challenging to replicate faithfully, because so much of his artistic identity lies in the tonal quality that sits between spoken word and melody.

AI-generated Drake content varies widely in quality. The most convincing models capture the signature “singing rap” hybrid delivery, but many fall flat on the subtle melodic qualities that distinguish his voice from other rap voices.

Juice WRLD AI Voice

Juice WRLD (Jarad Higgins) passed away in December 2019 at the age of 21. The “Juice WRLD AI voice” searches carry a different emotional weight than most other entries in this list because they reflect, at least in part, a desire to hear more from an artist taken too early.

This is one of the more ethically nuanced applications of AI voice technology. On the one hand, fans using AI voice tools to generate music in Juice WRLD’s style is arguably no different from tribute music or from sampling as a form of artistic memorial. On the other hand, the question of whether a deceased artist’s estate should control the AI-generated replication of their voice remains unresolved in most legal systems.

From a technical standpoint, Juice WRLD’s voice is a compelling challenge for AI. His melodic, emotionally raw vocal style, with a specific way of sliding between notes, requires a model that handles pitch variation and emotional texture, not just vocal timbre. The best AI Juice WRLD models capture some of this, though the emotional authenticity of his original performances remains difficult to replicate.

Donald Trump AI Voice Generator

Donald Trump’s voice is among the most recognizable in American public life, and AI voice generators built on his speech patterns have proliferated. The searches for “Donald Trump voice ai” and “trump voice ai generator” sit at 390 monthly searches combined, reflecting consistent public interest.

Trump’s voice has specific characteristics that make it relatively accessible for AI replication: a distinctive New York accent, a repetitive rhetorical structure, frequent emphasis patterns, and a vocal quality that has remained consistent across decades of public appearances. The sheer volume of source material (speeches, interviews, debates, and debates within debates) gives AI models extensive training data.

The use cases for Trump AI voice content span satire, political commentary, and news media demonstrations of deepfake technology’s risks. This is one of the few character voice types in which the informational and political commentary intents intersect with genuine public policy concerns about misinformation.

It is worth noting that using AI-generated voices of real political figures to spread false statements or fabricated positions is illegal in several jurisdictions and deeply problematic regardless of legality. The AI Trump voice searches appear to be largely oriented toward novelty and satire rather than deception, but the technology’s potential for misuse is real and documented.

LeBron James and Ice Spice AI Voices

LeBron James and Ice Spice represent an interesting pair in this dataset: one a sports legend with a global profile, the other a music artist who reached peak cultural saturation in 2023–2024. Both searches have a monthly volume of 320 and low competition, suggesting they’re driven by specific communities rather than broad casual interest.

LeBron’s speaking voice is warm, measured, and distinctively deep. He’s been a media presence since his teens, providing extensive training material. The searches suggest curiosity-driven use cases: fans wanting to hear AI LeBron commentary on basketball or respond to scenarios.

Ice Spice’s voice presents a different challenge; her specific Bronx inflection and the way she delivers her cadence are the source of its appeal. AI voice models of her speaking voice have circulated online, largely as novelty content tied to her rapid rise in cultural prominence.

Specialty and Creative AI Voice Types

Not every AI voice search is about a specific person or character. Some reflect a desire for a particular archetype, style, or comedic register that the AI can inhabit.

AI Pirate Voice Generator

The pirate voice is one of the most enduring audio archetypes in Western culture: “Arr, matey” is understood instantly across language backgrounds, age groups, and cultural contexts. AI pirate voice generator searches are largely utility-driven: people want a tool they can use for games, videos, Halloween content, or novelty projects.

Free AI pirate voice generators typically work by applying a combination of accent modifications, pitch adjustments, and roughness filters. The accent modifications push toward a theatrical West Country English approximation; the “pirate accent” is actually based on historical West Country British speech patterns. The result is less a specific person’s voice than a stylized archetype.

The best free options include ElevenLabs’ character voice packs (which have historically included pirate-style voices), Voicemod’s character filters for real-time use, and several browser-based TTS tools with preset voice characters. Quality varies significantly in the free tier, but for most novelty or creative purposes, even a mid-quality pirate voice AI generates the intended effect.

Roast AI Voice

Roast AI voice is a slightly different search pattern; it’s not looking for a specific character’s voice, but for an AI that can perform comedy roasts in a specific voice style. This search, with a monthly volume of 390, likely captures users looking for tools that combine voice generation with comedic writing capabilities.

The roast format has a specific verbal register: direct address, escalating insults, self-deprecating pivots, and a cadence that builds to punchlines. Several AI platforms have experimented with roast-specific modes that combine language-model humor with voice synthesis, allowing users to input a target (themselves, a friend, or a celebrity) and receive an audio roast.

The voice component matters enormously in roast delivery; in comedy, a robotically delivered insult lands very differently from one with proper comedic timing. The best roast AI voice implementations use models trained or prompted specifically for comedic delivery, with pauses before punchlines and a slight shift in tone that signals the joke is landing.

SZA and Singer AI Voice Generators

The search “free AI SZA singing voice generator” reflects the growing category of AI singing-voice tools, distinct from speaking-voice clones in important technical ways. Singing voice AI requires the model to handle pitch accuracy across musical scales, vibrato control, breath timing synced to musical phrasing, and the specific tonal quality a singer uses in performance versus speech.

SZA’s voice has specific characteristics that fans find appealing in an AI context: the distinctive upper register, the emotional rawness of her delivery, and the way she moves between whisper and full voice. Several AI music tools have trained their models on her catalog, though these are almost universally unofficial and legally questionable due to copyright considerations.

The “free” qualifier in the search is significant, as it suggests users are aware that premium AI singing-voice tools exist but are specifically seeking no-cost options. The free tiers of most AI singing platforms offer lower-quality output, limited usage, and often watermarked audio, but for experimentation and personal use, they’re functional.

How to Use AI Voice Models

Understanding what AI voice models exist is one thing. Knowing how to actually use them and get good results is another.

Free vs Paid AI Voice Platforms Compared

The AI voice platform landscape divides roughly into three tiers:

Free Tools

Free tools include browser-based TTS platforms such as FakeYou and Voicechanger.io, as well as several Discord bots that generate character voices. These tools are accessible, require no account for basic use, and feature community-contributed voice models covering a wide range of characters and celebrities. Quality is inconsistent; the best models on free platforms are impressive, but the worst are riddled with obvious AI artifacts.

Freemium Platforms

Freemium platforms like ElevenLabs, Play.ht, and Resemble.ai offer limited free monthly credits with significant quality improvements over fully free tools. ElevenLabs, in particular, has raised the bar substantially for voice quality, and its community voice library includes many character and celebrity voice models created by users. The free tier typically allows 10,000 characters of text generation per month, enough for substantial experimentation.
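To put a 10,000-character allowance in perspective, a back-of-the-envelope estimate helps. The sketch below assumes roughly six characters per word (including the space) and a speaking rate of about 150 words per minute. Both figures are rough assumptions rather than platform specifications, and actual output length depends on the voice and the text.

```python
def estimate_minutes(char_budget, chars_per_word=6.0, words_per_minute=150.0):
    """Convert a monthly character budget into approximate minutes of speech."""
    words = char_budget / chars_per_word
    return words / words_per_minute

def clips_per_month(char_budget, script):
    """How many times a given script fits into the monthly budget."""
    return char_budget // len(script)

print(round(estimate_minutes(10_000), 1))  # about 11 minutes under these assumptions
print(clips_per_month(10_000, "You have failed me for the last time."))
```

Under these assumptions, the free tier covers a little over ten minutes of generated speech per month, or a few hundred short one-liners.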

Premium Platforms

Premium platforms, the paid tiers of the same tools, offer higher quality, more characters generated per month, commercial licensing options, and often API access for developers integrating AI voice into applications. For serious content creation, the paid tiers are usually worth the investment.

For most people exploring AI character voices for the first time, starting with ElevenLabs’ free tier or FakeYou’s community platform gives the best combination of quality and accessibility.
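To get a feel for how far a free allowance stretches, the arithmetic can be sketched in a few lines of Python. This is a rough estimate only: it uses the 10,000-character monthly figure mentioned above and assumes the platform counts every character, including spaces and punctuation. The function names are made up for illustration and are not part of any platform’s API.

```python
import math


def generations_per_month(script: str, monthly_quota: int = 10_000) -> int:
    """How many times a script fits into a monthly character quota.

    Assumption: every character counts, including spaces and punctuation.
    """
    chars = len(script)
    if chars == 0:
        raise ValueError("script is empty")
    return monthly_quota // chars


def months_needed(total_chars: int, monthly_quota: int = 10_000) -> int:
    """How many monthly quotas a project of `total_chars` characters uses."""
    return math.ceil(total_chars / monthly_quota)
```

For example, a 25,000-character script would take three months of a 10,000-character allowance, which is a useful signal that a paid tier may be the more practical choice for longer projects.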

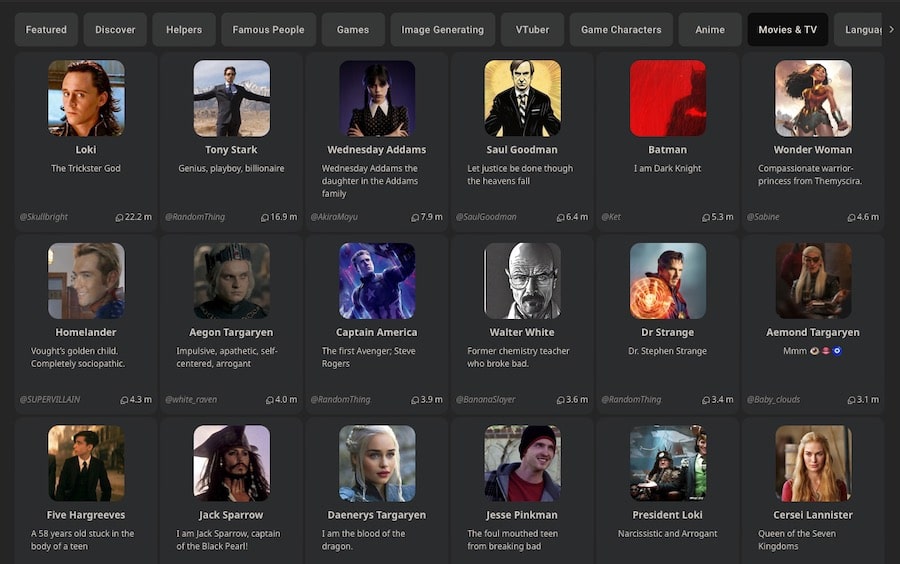

Best Tools for Generating Character AI Voices

ElevenLabs

ElevenLabs remains the overall leader for voice quality. Its community voice library hosts many character voices, and its Instant Voice Cloning feature (available on paid plans) lets users create new models from short audio samples. The voice engine handles emotional range and naturalness better than most competitors.

FakeYou

FakeYou is the most character-voice-specific platform, with an extensive library of community-contributed models specifically for fictional characters. If you’re looking for a specific cartoon, game, or anime voice, FakeYou’s library is often the best starting point.

Uberduck

Uberduck has positioned itself toward music production, making it particularly relevant for rapper and singer voice clones. Its catalog and interface are oriented toward musical applications rather than speech generation.

Voicemod

Voicemod is the dominant real-time voice changer, which makes it relevant if you want to use a character voice in live contexts like gaming sessions or streaming. As noted, though, that is a different use case from the discovery and generation focus of most character voice searches.

Tips for the Most Realistic AI Voice Output

Getting good results from an AI voice model is partly about choosing the right platform and partly about how you structure your input text. Several principles apply across most tools:

Write in the character’s natural cadence. Darth Vader speaks in short, declarative statements. SpongeBob speaks in run-on enthusiasm. The Mortal Kombat announcer uses all caps for a reason. The more your input text matches the natural speech patterns of the voice you’re trying to generate, the more natural the output.

Use punctuation deliberately. Commas create brief pauses. Periods create longer ones. Ellipses create the longest. For dramatic character voices, strategic punctuation can noticeably improve the output.

Match sentence length to character. Long, complex sentences tend to expose weaknesses in AI voice models. If you’re generating Darth Vader voice content, “I am your father” will sound better than “I want you to understand that, despite everything that has transpired between us, there is an undeniable biological reality that I feel compelled to share with you at this juncture.” Simple and direct plays to the AI’s strengths.

Test in short segments before generating long pieces. AI voice quality can vary significantly based on specific words and phoneme combinations. Test your intended script in 30–50 word chunks before committing to a long generation.
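The last two tips can be partly automated before you paste anything into a voice tool. The sketch below splits a script into test chunks of at most 50 words along sentence boundaries and flags sentences that may be too long for a clean read. It is a minimal illustration, not a feature of any platform: the function names are hypothetical, the sentence splitter is a simple punctuation heuristic, and the 20-word warning threshold is an assumption rather than a figure from any tool’s documentation.

```python
import re


def split_sentences(script: str) -> list[str]:
    """Split on whitespace that follows ., !, ? or an ellipsis character.

    A rough heuristic: abbreviations such as "Dr." will be split too.
    """
    parts = re.split(r"(?<=[.!?…])\s+", script.strip())
    return [p for p in parts if p]


def chunk_script(script: str, max_words: int = 50) -> list[str]:
    """Group whole sentences into chunks of at most `max_words` words.

    A single sentence longer than `max_words` is kept intact as its own
    chunk rather than being cut mid-sentence.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in split_sentences(script):
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


def long_sentences(script: str, limit: int = 20) -> list[str]:
    """Return sentences with more than `limit` words (assumed threshold)."""
    return [s for s in split_sentences(script) if len(s.split()) > limit]
```

Run `chunk_script` on a draft and generate each chunk separately. If `long_sentences` returns anything, consider breaking those sentences up before generating, especially for terse characters like Darth Vader.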

Legal and Ethical Considerations of AI Voice Cloning

This guide would be incomplete without addressing the genuine legal and ethical complexities surrounding AI voice technology. These aren’t abstract philosophical concerns; they have real implications for anyone who uses or creates AI voice content.

Is It Legal to Clone a Celebrity’s Voice with AI?

The honest answer is: it depends, and the law is still catching up with the technology.

In the United States, several legal frameworks are potentially relevant. The right of publicity, which protects a person’s right to control commercial use of their name, likeness, and voice, is recognized in most states, though the scope varies. California’s right of publicity law, covering the entertainment industry’s home base, is among the strongest in the country and explicitly extends protections beyond death.

The EU’s approach leans more toward privacy rights under GDPR, which provides different but potentially stronger protections for the use of a living person’s biometric data (which a voice print arguably constitutes).

Several jurisdictions have begun passing AI-specific legislation. Tennessee’s ELVIS Act (2024), named after Elvis Presley, was designed specifically to protect recording artists from unauthorized AI voice cloning. It represents the first state-level law directly targeting this issue. Other states have followed or are following.

For practical purposes, non-commercial, clearly satirical, or purely personal use of celebrity AI voices falls into legally grey territory that is rarely prosecuted. Commercial use (selling content featuring an AI celebrity voice, using it in advertising, or distributing it at scale) is significantly riskier and in some cases clearly illegal.

Copyright, Consent, and Deepfake Voice Laws

Copyright law protects the specific recorded performances of artists, but not necessarily the general sound of a voice. This is why sound-alike performers exist. It’s legal to hire someone to sound like a celebrity for commercial purposes (within limits), but not to sample the celebrity’s actual recording without permission.

AI voice cloning sits in an uncomfortable middle ground. The output isn’t a copy of an original recording; it’s a new generation from a model trained on that recording. Courts have not reached consistent conclusions about whether this constitutes copyright infringement of the source material.

Consent is the clearest ethical principle. The Darth Vader AI voice example, in which James Earl Jones explicitly approved the use of his voice recordings for AI generation, represents an emerging best practice. Several major artists and studios have begun developing AI voice licensing frameworks that would allow approved use while protecting the underlying performance.

Laws aimed specifically at deepfake voices are emerging at both the federal and state levels. At the federal level, the DEFIANCE Act (2024) addresses deepfakes involving non-consensual intimate imagery, with provisions that, in some interpretations, extend to audio deepfakes. More specific AI voice legislation is working its way through legislatures at multiple levels.

The ethical through-line, regardless of legal specifics: AI voice content that deceives audiences about what a real person said or believes is harmful. Satire, novelty, and fan creativity that is clearly labeled as AI-generated occupy very different moral territory from realistic-sounding fabrications designed to mislead.

Frequently Asked Questions (FAQs)

What is the best AI voice for Darth Vader?

ElevenLabs’ community voice library has hosted several well-regarded Darth Vader voice models. FakeYou also has community-contributed Vader models that vary in quality. Checking user ratings and listening to sample outputs before committing to a generation is recommended. For the absolute best quality, paid tiers on ElevenLabs give you access to higher-quality synthesis even when using community voice models.

Can AI replicate any voice perfectly?

Not yet, but it’s closer than most people expect. Current AI voice technology excels at capturing the broad tonal and cadence characteristics of a voice, but struggles with the finest nuances: the specific way someone’s voice changes under genuine emotion, the micro-variations that distinguish authentic performance from synthesis. For practical purposes such as novelty content, fan projects, and demonstrations, today’s AI voice tools are remarkably convincing. For uses where a trained listener is looking critically, the synthetic quality is usually detectable.

Are these AI voice generators free to use?

Many are, with limitations. FakeYou offers free access to its community voice library. ElevenLabs provides a free tier with monthly character limits. Several other platforms offer limited free generation. The best quality tools typically require paid subscriptions for serious use, but the free tiers are more than sufficient for experimentation and personal projects.

Which AI voices are trending right now?

Search data points to several consistent high performers: Darth Vader, SpongeBob and the SpongeBob cast, Kanye West, Drake, Goku, and the Mortal Kombat announcer have all maintained strong search interest over extended periods. Seasonal voices like Santa Claus spike predictably. Newer character voices tend to spike around media releases: when a new anime season drops or a game launches, interest in those characters’ AI voices typically follows within weeks.

What are the most realistic AI voice models available?

ElevenLabs’ highest-tier voice models consistently rank among the most realistic available to consumers. Microsoft’s Azure Neural Voice and Google’s WaveNet are technically impressive but are primarily developer-facing APIs rather than consumer tools. For character voices specifically, the quality ceiling is determined by both the platform’s voice engine and the quality of the community-contributed voice model. A well-trained model on a mid-tier platform can outperform a poorly trained model on a top-tier platform.

Is it ethical to make content with a deceased person’s AI voice?

This is one of the most discussed questions in AI ethics. The considerations include whether the deceased expressed preferences regarding the posthumous use of their voice, whether their estate takes a position on the matter, and whether the content is clearly labeled as AI-generated or presented as authentic. Tribute content that is clearly identified as AI-generated occupies a very different ethical territory from content designed to appear as genuine recordings. Artists and creators who died young, like Juice WRLD, generate the most emotionally complex discussions, where fan tribute intent collides with questions about exploitation and consent.