Everywhere you look, companies are pouring billions into artificial intelligence. New tools, new models, new platforms: the technology side of AI has never been more advanced or more accessible. And yet, despite all this investment, a striking number of AI transformation initiatives quietly fall apart.

They miss their targets. They get shelved after pilot projects. They create more confusion than clarity. And when leaders try to diagnose what went wrong, the instinct is almost always the same: blame the technology. Wrong model. Wrong data. Wrong vendor.

But here is the uncomfortable truth that researchers, executives, and AI practitioners are increasingly pointing to: AI transformation is not failing because the technology is broken. It is failing because the governance is missing.

This argument has gained significant traction in academic circles, boardrooms, and on social platforms like Twitter, where thought leaders in AI, policy, and organizational design have pushed back hard against the idea that AI is purely a technical challenge. The consensus is shifting. And if you are leading or advising any kind of AI initiative, this shift should change how you think about everything.

This guide breaks down exactly what that means, why governance gaps are the real enemy of successful AI transformation, and what you can do about it.

What Does “AI Transformation Is a Problem of Governance” Mean?

Breaking Down the Core Argument

At its simplest, the argument is this: the reason most organizations struggle with AI transformation is not that they chose the wrong algorithm or lacked enough computing power. It is because they have no clear structure for how AI decisions get made, who is accountable when things go wrong, and what rules guide AI behavior inside the organization.

Governance, in this context, refers to the policies, processes, roles, and accountability structures that govern how AI is developed, deployed, monitored, and corrected within an organization.

When governance is weak or absent, even the most technically sophisticated AI system will fail to deliver lasting value. It will produce outputs that nobody trusts. It will create legal exposure that nobody anticipated. It will amplify existing biases that nobody checked. And it will drift away from the organization’s actual goals because nobody is steering it.

The technology did not fail. The human structure around the technology failed.

Why This Statement Went Viral on Twitter and Social Media

The phrase “AI transformation is a problem of governance” gained significant online traction because it ran counter to a very popular and well-funded narrative: that AI is primarily a technical puzzle, and that if you just get the engineering right, the transformation will follow.

Practitioners, consultants, and researchers who had spent years watching well-resourced AI projects collapse from the inside pushed back. They pointed to organizations with world-class data science teams still struggling to get AI into production at scale. They pointed to models that performed perfectly in testing but caused reputational damage in deployment. They pointed to AI strategies that were technically coherent but organizationally incoherent.

The argument resonated because it aligned with the lived experience of people working in AI transformation programs. Technology was rarely the wall. Structure, ownership, policy, and culture (governance in its broadest sense) were almost always the wall.

Technology vs. Governance: What’s the Real Bottleneck?

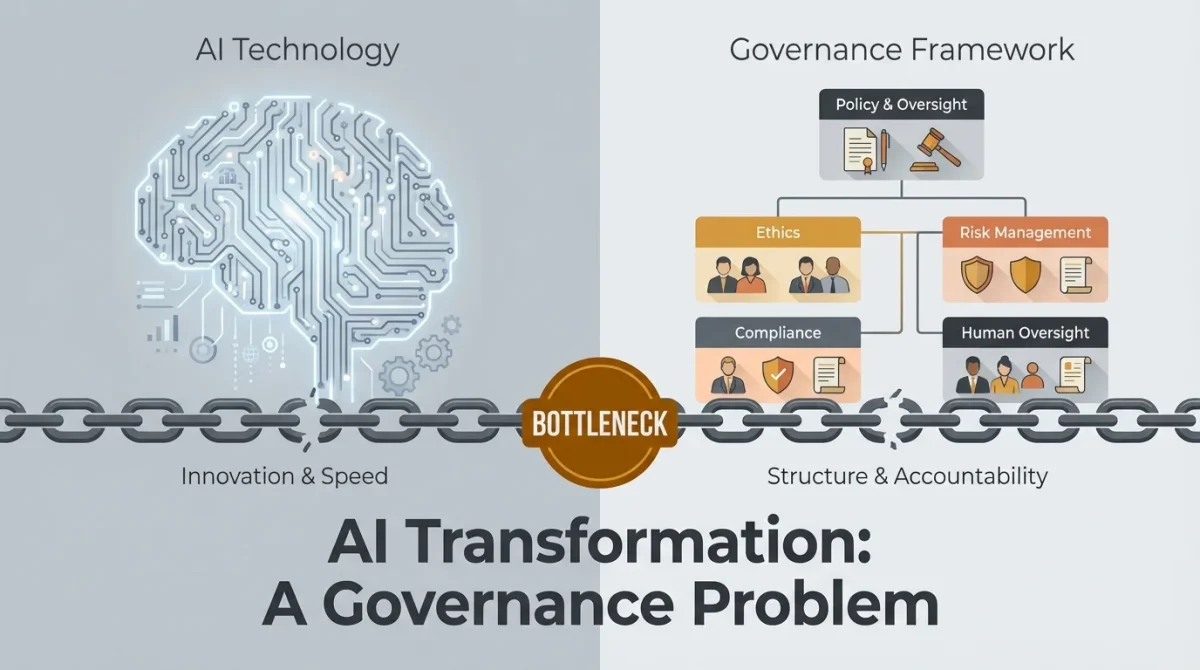

Think of it this way. Technology is the engine. Governance is the steering wheel, the brakes, and the driver’s license combined.

You can have the most powerful engine ever built, but if you have no steering, no way to stop, and nobody qualified to drive, you are not going anywhere useful. You are just creating a very expensive hazard.

The same logic applies to AI. Organizations that treat AI transformation as purely a technology procurement problem consistently underinvest in the governance infrastructure that makes that technology safe, effective, and scalable. They buy the engine. They forget everything else.

Why Most AI Transformations Fail

The Myth That AI Failure Is a Technical Problem

There is a long history of high-profile AI failures being framed as technical problems when they were fundamentally governance problems.

Amazon’s AI recruiting tool, which was scrapped after it was found to systematically disadvantage women (as Reuters reported in 2018), was technically sophisticated. The failure was not in the algorithm’s ability to process data. The failure was in the absence of oversight structures that would have caught and corrected the bias early in development, before the tool could be relied on at scale.

The same pattern appears repeatedly across industries. AI systems that produce discriminatory outputs, make unsafe healthcare recommendations, or generate misleading content are almost never failing because someone wrote bad code. They are failing because nobody built the checks, balances, and accountability mechanisms around the code.

Framing these failures as technical problems leads to technical fixes, such as retraining the model, switching vendors, or adding more data, that never address the root cause. The root cause is almost always governance.

Poor Decision-Making Structures Around AI Deployment

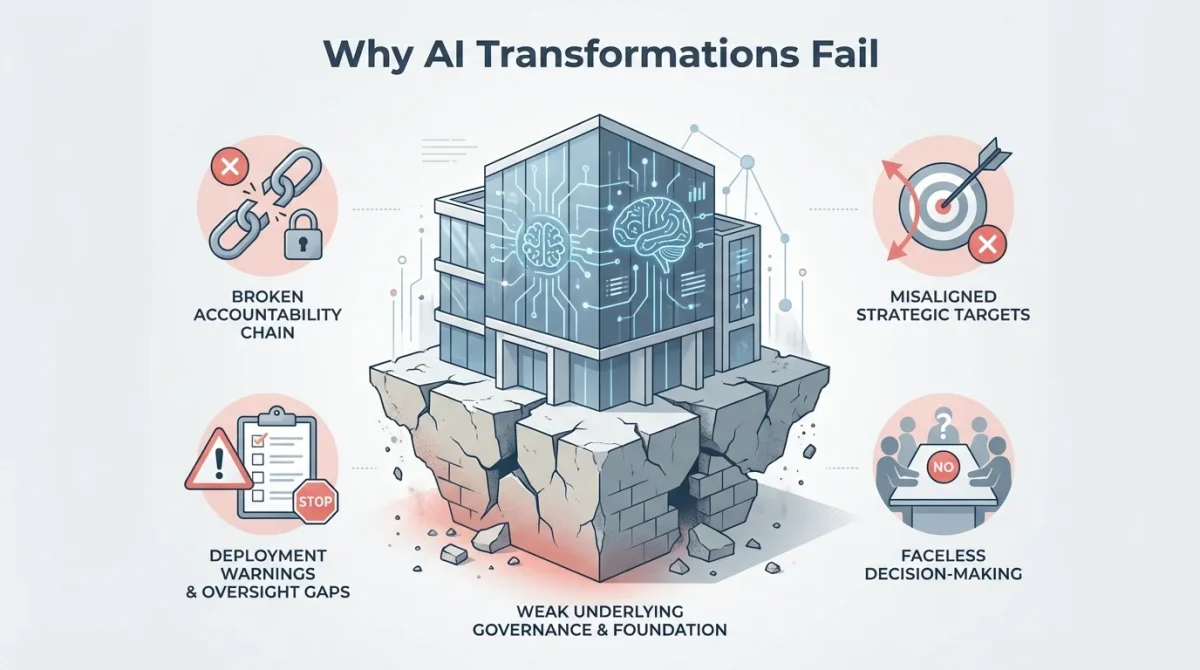

One of the clearest signs of a governance deficit is the absence of a defined decision-making structure for AI deployment. In organizations without strong governance, AI projects tend to advance based on enthusiasm and internal politics rather than rigorous evaluation criteria.

Who decides whether a model is ready for production? Who reviews its outputs for bias or legal risk? Who has the authority to pause or roll back a deployment if problems emerge? In governance-weak organizations, these questions either have no answer or have too many competing answers.

The result is that AI systems get deployed before they are ready, stay deployed longer than they should, and cause damage that proper decision-making structures would have prevented.

Lack of Accountability in AI-Driven Organizations

Accountability is the backbone of governance and one of the most commonly missing elements in AI transformation programs.

When an AI system makes a consequential mistake (a flawed credit decision, a biased hiring recommendation, a dangerous medical suggestion), someone needs to be responsible. Not abstractly responsible in a corporate communications sense, but structurally responsible in a way that creates real incentives for oversight and correction.

In organizations without clear AI accountability structures, mistakes get diffused across teams and vendors until nobody is actually responsible for anything. This diffusion does not just create legal and reputational risk. It destroys the learning loops that would otherwise allow the organization to improve over time.

What Is AI Governance, and Why Does It Matter?

Definition of AI Governance

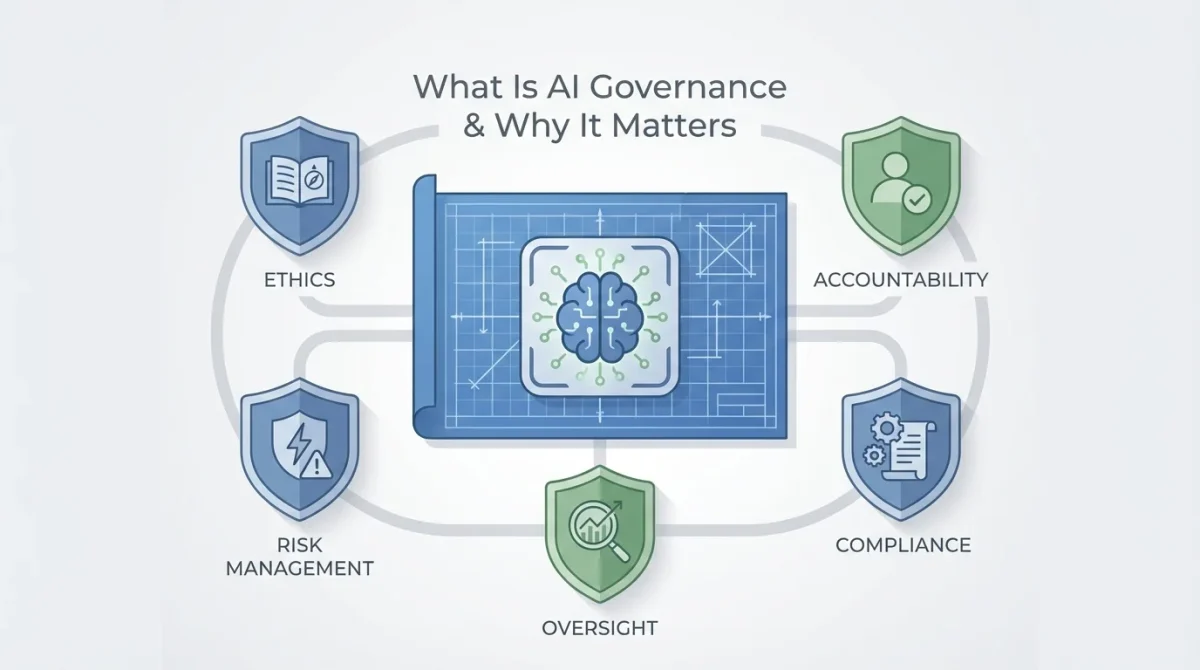

AI governance refers to the set of policies, frameworks, roles, and processes that guide how an organization develops, deploys, uses, and monitors artificial intelligence systems. It encompasses everything from data management policies and ethical guidelines to regulatory compliance mechanisms and internal audit processes.

Good AI governance does not mean slowing down AI development. It means creating the structures that make AI development sustainable, trustworthy, and aligned with the organization’s goals and values.

Key Components of a Strong AI Governance Framework

A robust AI governance framework typically includes several interconnected components.

Clear ownership and accountability mean that every AI system in production has a named owner responsible for its performance, compliance, and ethical behavior. There is no ambiguity about who is accountable.

Ethical guidelines and principles provide a values-based foundation for AI decision-making, covering issues such as fairness, transparency, privacy, and human oversight.

Risk assessment processes ensure that AI systems are evaluated for potential harms before deployment and monitored for emerging risks after deployment.

Data governance integration links AI governance to the organization’s broader data management policies, ensuring that the data feeding AI systems is accurate, representative, and appropriately sourced.

Regulatory and legal compliance mechanisms ensure that AI systems meet applicable legal requirements and industry standards, and that compliance is actively maintained rather than assumed.

Escalation and incident response protocols define what happens when an AI system produces problematic outputs, including who is notified, what actions are taken, and how the system is corrected or retired.
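To make the escalation idea concrete, here is a minimal Python sketch of an incident playbook that maps a severity level to who is notified and what happens to the system. The severity levels, role names, and actions are hypothetical placeholders, not part of any standard; a real protocol would come from your own governance charter.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class EscalationStep:
    notify: tuple[str, ...]  # roles that must be informed
    action: str              # what happens to the AI system


# Hypothetical playbook: role names and actions are illustrative placeholders.
PLAYBOOK: dict[Severity, EscalationStep] = {
    Severity.LOW: EscalationStep(
        ("technical_owner",), "log and review at next scheduled check"
    ),
    Severity.MEDIUM: EscalationStep(
        ("technical_owner", "business_owner"), "route outputs through human review"
    ),
    Severity.HIGH: EscalationStep(
        ("technical_owner", "business_owner", "compliance_reviewer"), "pause the system"
    ),
}


def escalate(severity: Severity) -> EscalationStep:
    """Look up who is notified and what action is taken for an incident."""
    return PLAYBOOK[severity]


step = escalate(Severity.HIGH)
print(step.notify, "->", step.action)
```

Keeping the playbook as data rather than as tribal knowledge means the answer to “who gets called, and do we pause?” is written down before an incident, not improvised during one.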

Who Is Responsible for AI Governance in an Organization?

This is one of the most debated questions in enterprise AI, and the honest answer is that responsibility is distributed, but it needs to be deliberately structured rather than accidentally fragmented.

At the leadership level, the Chief AI Officer, Chief Data Officer, or an equivalent executive sponsor needs to own the overall AI governance strategy and ensure it has the organizational authority to do so.

At the operational level, data scientists, engineers, and product managers need governance responsibilities embedded directly into their workflows, not treated as a separate compliance checkbox.

Legal, compliance, and risk teams need meaningful visibility into AI systems and genuine authority to flag or escalate concerns.

And in organizations serious about AI governance, there is typically an AI ethics committee or review board that provides cross-functional oversight of high-stakes AI deployments.

The critical point is that governance cannot live in any single team. It has to be woven into the organizational fabric.

The Real Governance Challenges Behind AI Transformation

Data Ownership and Access Control

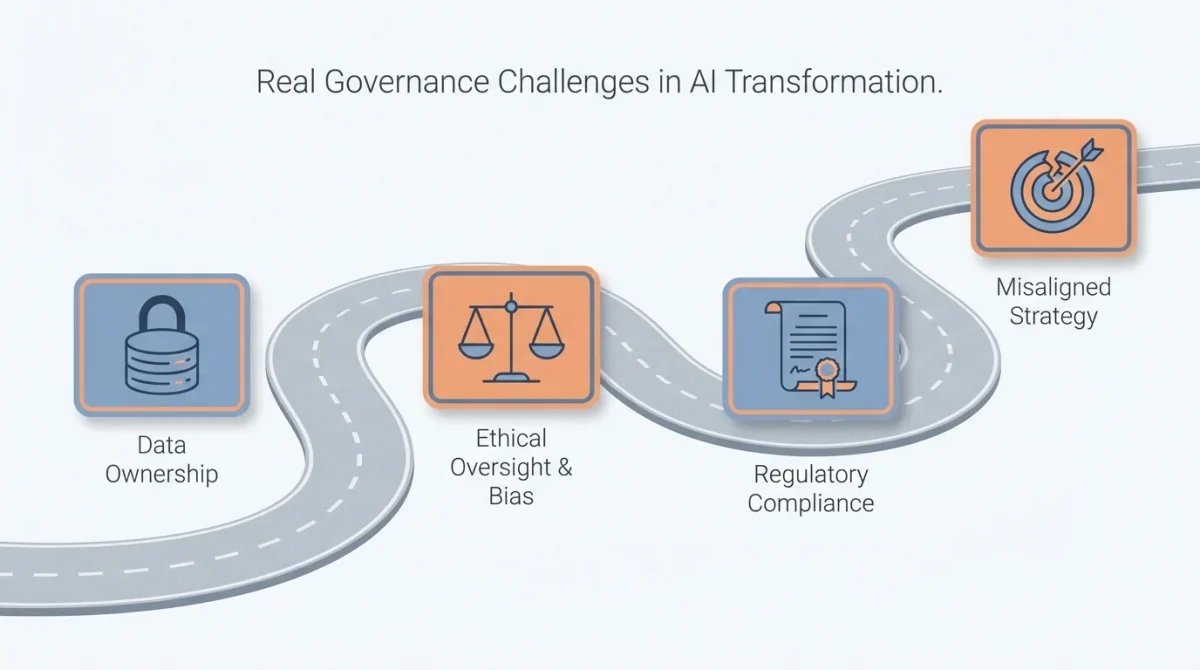

Data is the raw material of AI, and governance failures around data are among the most common causes of AI transformation breakdowns.

Who owns the data that an AI system uses? Who can access, modify, or delete it? What happens when that data contains personally identifiable information, or when it reflects historical patterns of discrimination?

Without clear data ownership and access control structures, AI systems are built on legally precarious, ethically questionable, and technically unstable foundations. The data governance problem is inseparable from the AI governance problem.

Ethical Oversight and Bias Management

AI systems inherit the biases present in the data they are trained on, and without active oversight, those biases get amplified and systematized at scale.

Ethical oversight means having structured processes for identifying, measuring, and mitigating bias in AI systems. It means involving diverse perspectives in the design and evaluation of AI tools. It means creating feedback mechanisms that enable affected communities or end users to surface problems that internal teams might miss.

This is not a technical problem that better algorithms will eventually solve on their own. It is a governance problem that requires human judgment, institutional commitment, and ongoing investment.

Regulatory Compliance and Legal Risk

The regulatory environment around AI is evolving rapidly. The European Union’s AI Act represents the most comprehensive AI regulation framework currently in force, classifying AI systems by risk level and imposing significant obligations on high-risk deployments. Similar regulatory movements are underway in the United States, the United Kingdom, Canada, and across the Asia-Pacific region.

Organizations that lack AI governance frameworks often run AI systems that are already non-compliant with existing or imminent regulations, and they do not know it because nobody is watching.

Legal risk from AI is not theoretical. It includes privacy violations, discrimination claims, consumer protection breaches, and liability for AI-generated decisions that cause harm. Governance is the mechanism that manages this risk proactively rather than reactively.

Misalignment Between AI Strategy and Business Goals

One of the subtlest and most damaging governance failures is the misalignment between what an organization’s AI systems are optimized to do and what the organization actually needs them to do.

This happens when AI projects are driven by technical teams with deep expertise but limited business context, or by business teams with clear goals but no mechanism for translating those goals into AI design requirements.

Governance creates the bridge. A structured AI governance process ensures that business objectives are clearly defined, translated into measurable AI performance criteria, and regularly reviewed against actual system behavior. Without this bridge, organizations end up with AI that is technically impressive but strategically irrelevant.

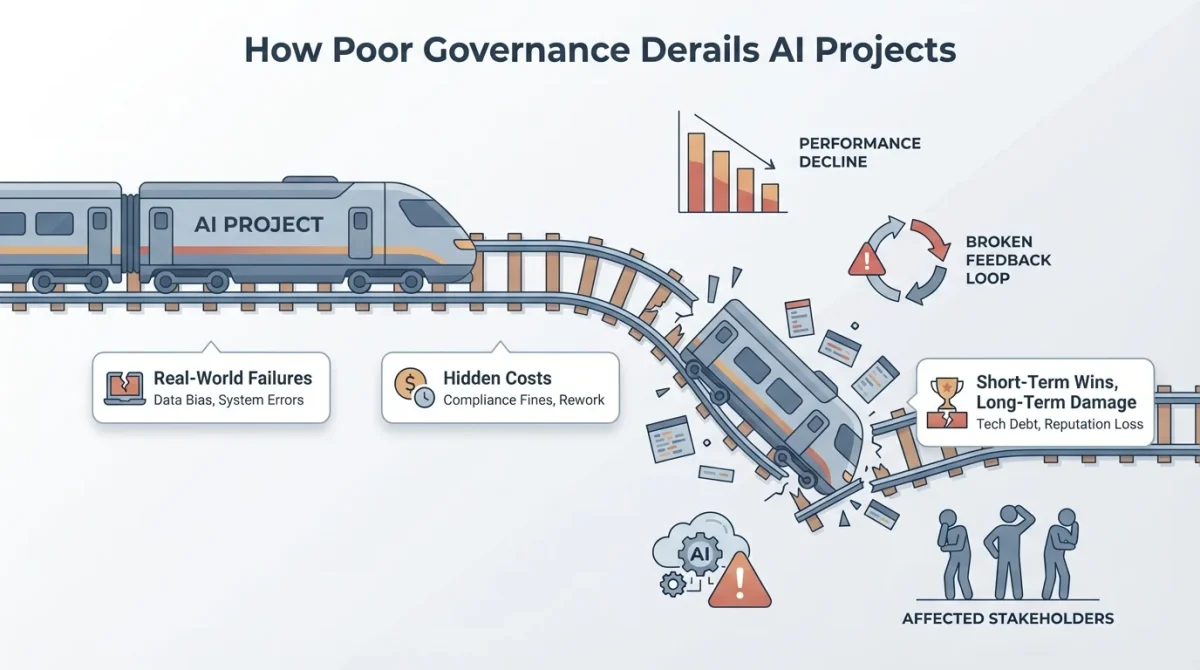

How Poor Governance Derails AI Projects

Real-World Examples of AI Failures Caused by Governance Gaps

The record of AI failures attributable to governance gaps is already substantial, and it spans every major industry.

In healthcare, AI diagnostic tools have been deployed in clinical settings without adequate oversight of their performance across different patient populations, leading to systematic disparities in care quality that took years to identify.

In financial services, automated lending and credit-scoring systems have perpetuated discriminatory patterns in ways that proper pre-deployment bias auditing could have caught.

In criminal justice, AI risk assessment tools used to inform sentencing and parole decisions have shown demonstrable racial bias, with consequences measured not in budget overruns but in human lives and liberties.

In each case, the technology worked as designed. The governance failed to ensure the design was right.

The Hidden Cost of Ungoverned AI

The costs of poor AI governance extend well beyond the immediate consequences of specific system failures.

Reputational damage from a high-profile AI failure can take years to repair and can destroy customer trust that took decades to build. Regulatory penalties from non-compliant AI deployments can run into the hundreds of millions. The talent consequences, such as engineers and data scientists leaving because they do not want to work on AI systems they cannot be proud of, are rarely measured, but they are very real.

Perhaps most significantly, organizations with poor AI governance tend to develop cultures of distrust toward AI. When employees see AI systems producing unreliable or unfair outputs and no one is held accountable, they stop believing in the organization’s AI initiatives altogether. Getting that trust back is extremely difficult.

Short-Term Wins vs. Long-Term Organizational Damage

Poor AI governance often produces short-term wins that mask long-term damage. A company might deploy an AI system quickly, with minimal governance overhead, and achieve impressive early results. The speed feels like proof that the governance skeptics were wrong.

But the governance debt accumulates. The system develops blind spots that nobody is monitoring. Regulatory requirements change, and the system falls out of compliance. The data it was trained on ages, and its performance degrades. An incident occurs, and nobody knows who is responsible or what the response protocol is.

The short-term win becomes a long-term liability. And the organization that moved fast by skipping governance ends up moving slowly for years afterward, managing the fallout.

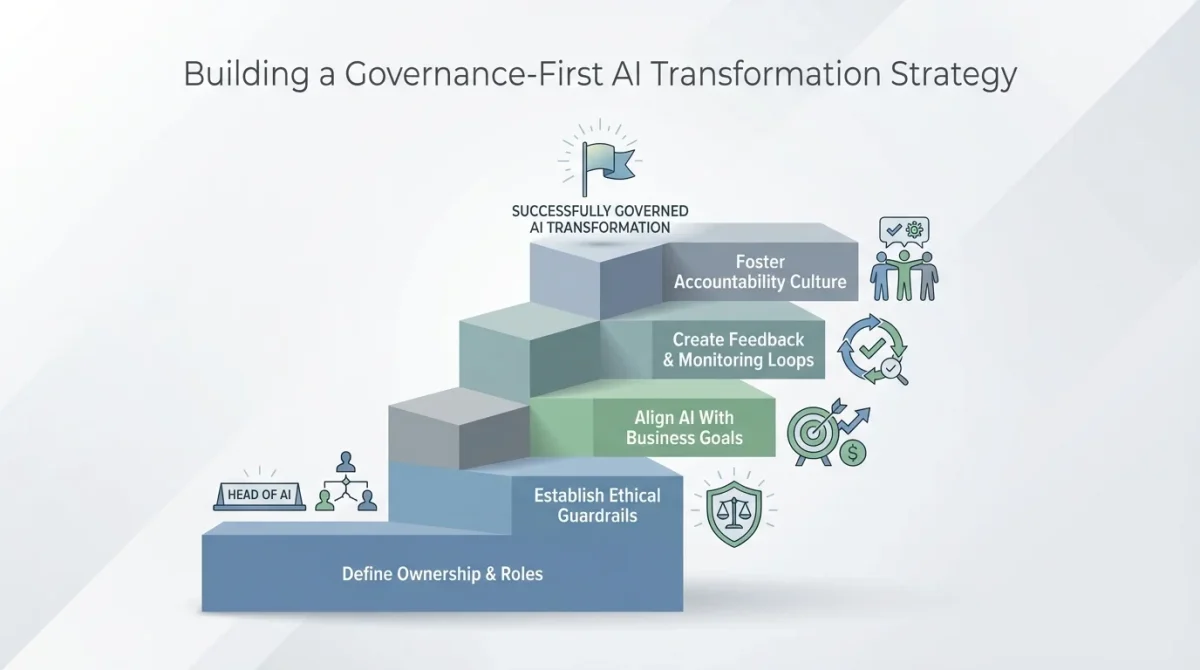

Building a Governance-First AI Transformation Strategy

Step 1: Define Clear AI Ownership and Roles

Before a single line of AI model code is written or a vendor contract is signed, establish who owns what.

Every AI initiative needs a named business owner who is accountable for its outcomes. Every AI system in production needs a named technical owner who is responsible for its performance and health. Every AI deployment needs a designated compliance reviewer who is responsible for regulatory alignment.

This is not bureaucracy for its own sake. It is the minimum structural foundation that allows accountability to function.
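One way to make ownership checkable rather than aspirational is to keep an inventory of AI systems and flag any entry with an unnamed role. The sketch below assumes a simple in-memory list; the `AISystemRecord` class, the system names, and the team names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One entry in a hypothetical inventory of AI systems."""
    name: str
    business_owner: Optional[str] = None       # accountable for outcomes
    technical_owner: Optional[str] = None      # responsible for performance and health
    compliance_reviewer: Optional[str] = None  # responsible for regulatory alignment

    def missing_roles(self) -> list[str]:
        """Return the roles for which nobody has been named."""
        roles = {
            "business_owner": self.business_owner,
            "technical_owner": self.technical_owner,
            "compliance_reviewer": self.compliance_reviewer,
        }
        return [role for role, person in roles.items() if not person]


def ownership_gaps(inventory: list[AISystemRecord]) -> dict[str, list[str]]:
    """Map each system that has unfilled roles to the list of those roles."""
    return {rec.name: rec.missing_roles() for rec in inventory if rec.missing_roles()}


inventory = [
    AISystemRecord("resume-screener", "Head of Talent", "ML Platform Team", "Legal"),
    AISystemRecord("support-chatbot", technical_owner="Conversational AI Team"),
]
print(ownership_gaps(inventory))
# {'support-chatbot': ['business_owner', 'compliance_reviewer']}
```

A check like this could run before any deployment approval, so a system with an empty ownership slot simply cannot move forward.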

Step 2: Establish Ethical and Compliance Guardrails

Define your organization’s AI principles clearly and specifically. Generic commitments to “responsible AI” are not guardrails. Specific, enforceable policies about what your AI systems will and will not do, what data they will and will not use, and what outcomes they will and will not optimize for are guardrails.

Map your AI activities against applicable regulatory frameworks and establish monitoring processes to maintain compliance as regulations evolve.

Build bias assessment into your standard model development workflow, not as an afterthought but as a gate that every model must pass before deployment.
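As an illustration of a bias gate, the sketch below computes a disparate impact ratio (the lowest group selection rate divided by the highest) and blocks deployment when it falls below 0.8. The 0.8 threshold borrows the “four-fifths rule” from US employment-selection guidance; it is one screening heuristic among many fairness metrics, not a sufficient test of fairness on its own. The function names, group labels, and counts are hypothetical.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """outcomes maps group -> (number selected, number evaluated)."""
    rates = {}
    for group, (selected, total) in outcomes.items():
        if total <= 0:
            raise ValueError(f"group {group!r} has no evaluated cases")
        rates[group] = selected / total
    return rates


def disparate_impact_ratio(outcomes: dict[str, tuple[int, int]]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group was selected; the ratio is undefined")
    return min(rates.values()) / highest


def passes_bias_gate(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> bool:
    """Deployment gate: fail if any group's rate is below `threshold` x the top rate."""
    return disparate_impact_ratio(outcomes) >= threshold


# Hypothetical validation results for a screening model.
print(passes_bias_gate({"group_a": (50, 100), "group_b": (30, 100)}))  # False (ratio 0.6)
print(passes_bias_gate({"group_a": (50, 100), "group_b": (45, 100)}))  # True (ratio 0.9)
```

Treating the result as a hard gate, rather than a report someone may or may not read, is what turns bias assessment from an afterthought into a pre-deployment requirement.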

Step 3: Align AI Initiatives With Business Objectives

Every AI project should begin with a governance conversation that answers three questions: What specific business problem are we solving? How will we measure whether the AI solution is actually solving it? And who is responsible for regularly reviewing that measurement?

This alignment process prevents the common pattern of technically successful AI projects that fail to deliver business value because nobody connected the technical goals to the business goals from the start.

Step 4: Create Feedback Loops and Monitoring Systems

Governance does not end at deployment. In many ways, it intensifies after deployment.

AI systems need continuous monitoring for performance degradation, bias drift, and emerging risks. They need feedback channels that surface problems from end users, affected communities, and frontline employees who interact with AI outputs daily.

They need regular reviews: not just annual audits, but ongoing governance processes that ask whether the system is still performing as intended, still aligned with business goals, and still compliant with applicable requirements.
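A minimal sketch of one monitoring check, assuming ground-truth labels eventually arrive for a sample of predictions: track accuracy over a rolling window and flag the system for escalation when it drops below an agreed baseline. The class name, the 0.05 tolerance, the window size, and the minimum sample count are hypothetical choices; monitoring bias drift would mean running the same check separately for each group.

```python
from collections import deque
from typing import Optional


class PerformanceMonitor:
    """Flags a deployed model whose recent accuracy falls below its baseline."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.05, window: int = 500):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window` labelled outcomes are kept.
        self._recent: deque = deque(maxlen=window)

    def record(self, correct: bool) -> None:
        """Record whether one prediction turned out to be correct."""
        self._recent.append(correct)

    def recent_accuracy(self) -> Optional[float]:
        if not self._recent:
            return None
        return sum(self._recent) / len(self._recent)

    def needs_escalation(self, min_samples: int = 100) -> bool:
        """True when there is enough evidence and accuracy has dropped past tolerance."""
        accuracy = self.recent_accuracy()
        if accuracy is None or len(self._recent) < min_samples:
            return False  # too little data to judge either way
        return accuracy < self.baseline_accuracy - self.tolerance


monitor = PerformanceMonitor(baseline_accuracy=0.90)
for i in range(200):
    monitor.record(i % 5 != 0)  # 4 of every 5 predictions correct -> 0.80 accuracy
print(monitor.recent_accuracy(), monitor.needs_escalation())
```

The `min_samples` guard is a deliberate design choice: it keeps a handful of early errors from triggering an escalation before there is enough evidence to act on.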

Step 5: Foster a Culture of AI Accountability

The most sophisticated governance framework in the world will fail if the organizational culture does not support it. Culture is the invisible governance layer that determines whether formal policies are actually followed or quietly bypassed.

Building a culture of AI accountability means making governance a source of competitive pride rather than a compliance burden. It means recognizing and rewarding teams that identify and fix AI problems. It means fostering psychological safety so employees can raise AI concerns without fear of reprisal. It means leadership consistently modeling the belief that doing AI right matters more than doing AI fast.

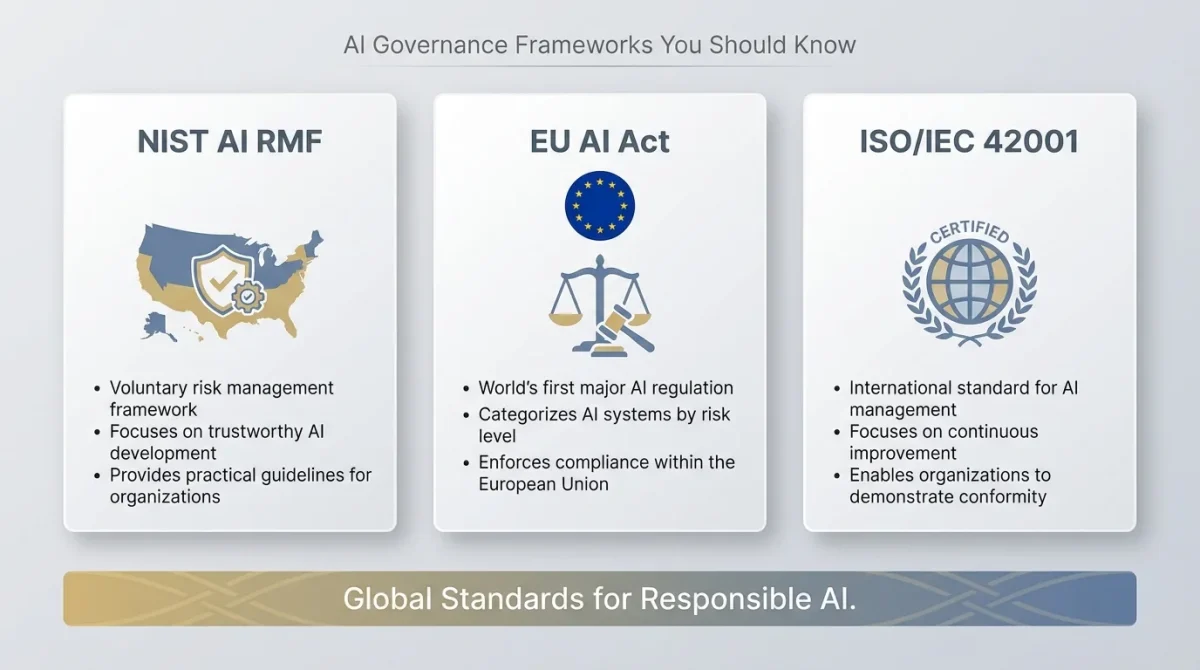

AI Governance Frameworks You Should Know

NIST AI Risk Management Framework

The National Institute of Standards and Technology (NIST) in the United States published its AI Risk Management Framework (AI RMF 1.0) in January 2023 to help organizations manage AI risks throughout their lifecycle. The framework organizes AI risk management around four core functions (Govern, Map, Measure, and Manage) and provides practical guidance for implementing each.

It is voluntary rather than regulatory, but it has become a widely adopted baseline for organizations in the United States looking to build credible AI governance programs.

EU AI Act and Its Governance Implications

The European Union’s AI Act is the world’s first comprehensive legal framework specifically regulating AI. It classifies AI systems into risk categories (unacceptable risk, high risk, limited risk, and minimal risk) and imposes requirements proportional to each category.

High-risk AI applications, including those used in hiring, credit decisions, healthcare, and law enforcement, face significant requirements around transparency, data governance, human oversight, and conformity assessment before deployment.

For organizations operating in or serving customers in the European Union, the AI Act creates direct governance obligations. But even for organizations outside the EU, the Act is setting the de facto global standard for serious AI governance, and many multinationals are aligning their global practices with it.

ISO/IEC 42001 AI Management Systems Standard

Published in 2023, ISO/IEC 42001 is the first international standard specifically for AI management systems. It provides a structured framework for establishing, implementing, maintaining, and continuously improving AI governance within organizations.

Modeled on the structure of other ISO management system standards, such as ISO/IEC 27001 for information security, it offers organizations a certifiable framework for demonstrating AI governance maturity to customers, regulators, and partners.

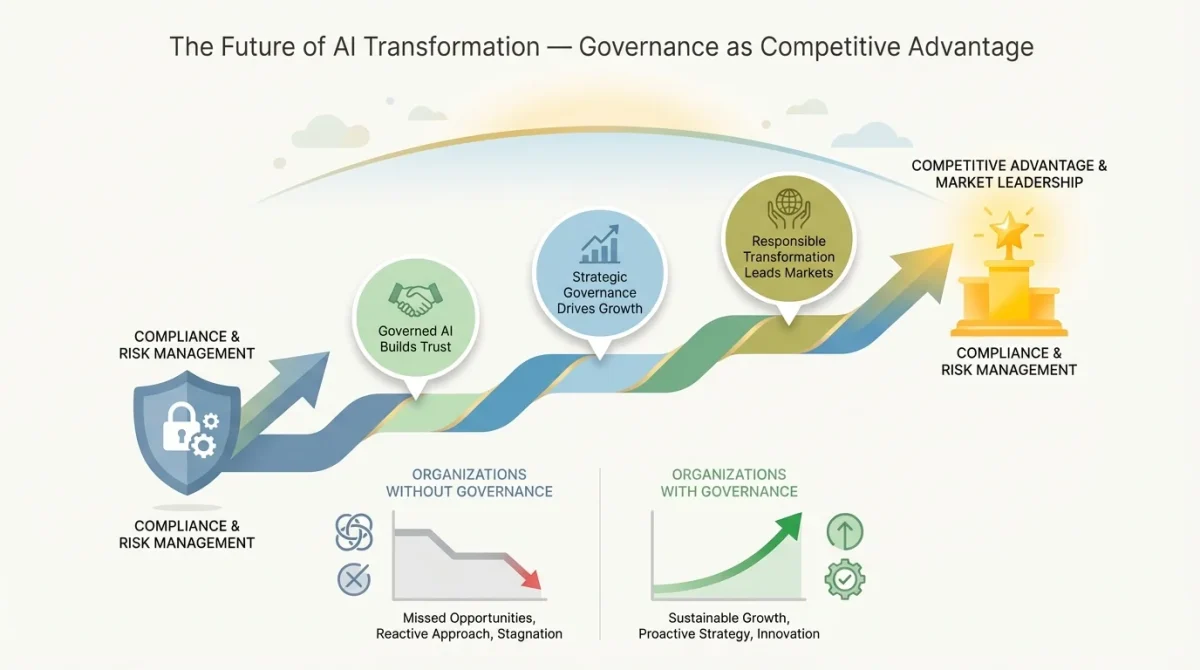

The Future of AI Transformation Governance

Why Organizations That Govern AI Well Will Lead

For the first decade of enterprise AI, governance was widely treated as a constraint, a compliance cost that slowed down innovation and added bureaucratic friction. That framing is changing rapidly.

Organizations that have invested seriously in AI governance are finding that it creates advantages that extend far beyond risk reduction. They can move faster in regulated industries because they have pre-built the compliance infrastructure. They can earn the trust of customers and partners that competitors cannot easily replicate. They can attract and retain AI talent that wants to work in environments where ethics and accountability are taken seriously.

In an era where AI incidents generate front-page coverage and regulatory scrutiny is intensifying globally, governance is becoming a market differentiator. The organizations leading AI transformation in the next decade will not be those that moved fastest. They will be the ones that built the most trustworthy AI ecosystems.

From Compliance to Strategic AI Governance

The most advanced organizations are moving beyond compliance-focused governance toward strategic AI governance, the active use of governance mechanisms to create business value rather than just manage risk.

Strategic AI governance means using transparency and explainability as selling points with customers who are increasingly asking how vendors’ AI systems work and how they are controlled. It means using governance frameworks to build AI partnerships and ecosystem relationships that competitors find difficult to replicate. It means treating AI accountability as a leadership capability rather than a legal department function.

This shift from defensive to strategic governance is still in its early stages, but the organizations making it are building durable competitive advantages.

What Responsible AI Transformation Looks Like in Practice

Responsible AI transformation does not mean slow AI transformation. It means AI transformation built on foundations that do not have to be torn down and rebuilt when they fail under pressure.

It looks like governance conversations happening alongside technology conversations, not after them. It looks like business leaders who understand AI governance well enough to ask the right questions of their technical teams. It looks like data scientists who see ethical oversight as part of their professional identity rather than an external imposition. It looks like boards of directors that treat AI governance as a fiduciary responsibility rather than a technical detail.

Most of all, it looks like organizations that recognize what many people who have watched enough AI transformations succeed and fail have come to accept: the technology was never the hard part. Governance has always been the hard part.

Frequently Asked Questions (FAQs)

Is AI transformation mainly a technology or governance problem?

The evidence increasingly suggests that AI transformation is primarily a governance problem. While technical capability is a necessary foundation, the most common causes of AI transformation failures, such as misaligned objectives, accountability gaps, bias and ethical oversights, regulatory non-compliance, and poor deployment decisions, are governance failures rather than technical ones. Organizations with strong governance and average technology tend to fare better than organizations with cutting-edge technology and weak governance.

What are the biggest governance mistakes companies make during AI transformation?

The most common governance mistakes include: launching AI initiatives without defined ownership and accountability structures; treating governance as a post-deployment compliance exercise rather than a design-phase requirement; failing to connect AI performance metrics to business outcomes; underinvesting in ongoing monitoring after deployment; and siloing governance responsibility in the legal or compliance team rather than embedding it across the organization.

How do you build an AI governance team from scratch?

Start with ownership clarity. Designate a senior executive sponsor for AI governance, ideally at the C-suite level, with clear authority and accountability. Then build a cross-functional working group that includes representatives from data science, legal, compliance, business operations, and human resources. Define a governance charter that establishes the team’s scope, decision-making authority, and escalation processes. Adopt or adapt an established framework, such as the NIST AI RMF or ISO 42001, as your structural foundation, then customize it to your organization’s risk profile and industry context.

What role does leadership play in AI governance?

Leadership is not just a support function for AI governance; it is the determining factor in whether governance works at all. When senior leaders treat AI governance as a genuine strategic priority rather than a regulatory necessity, the rest of the organization follows. When they model accountability, reward teams that identify AI problems, and make governance part of the organization’s identity around AI, governance becomes embedded in the culture rather than bolted onto the process. Conversely, when leaders deprioritize governance in favor of speed, governance frameworks become performative documents that no one follows.

Can small businesses implement AI governance frameworks?

Absolutely. AI governance does not require the resources of a large enterprise. Small businesses can implement lightweight governance appropriate to their scale and risk profile. The essential elements (defined ownership, basic risk assessment before deployment, clear policies about data use, and a process for monitoring and addressing problems) can be implemented without dedicated governance teams or expensive frameworks. The NIST AI RMF offers scalable guidance that organizations of any size can adopt. The key is to start governance conversations early, before AI systems are in production, rather than trying to retrofit governance onto systems that are already causing problems.