You downloaded DeepSeek because it was free, fast, and honestly impressive. ChatGPT-level responses at zero cost? That sounded like a no-brainer. But somewhere between your first prompt and your fifth conversation, a nagging question probably surfaced: Where is all this data actually going?

You’re not alone in asking. Governments, cybersecurity researchers, privacy regulators, and ordinary users across the globe have been asking the same question, and the answers they’ve found are deeply unsettling.

In this guide, we cut through the noise. No fear-mongering. No vague warnings. Just a clear, research-backed breakdown of what DeepSeek actually does with your data, what security vulnerabilities have been confirmed, which countries have banned it and why, and what you should do right now if you’ve already been using it.

What Is DeepSeek? A Quick Overview

DeepSeek is a generative AI chatbot developed by Hangzhou DeepSeek Artificial Intelligence Co., Ltd., a Chinese company headquartered in Hangzhou, China. It functions similarly to ChatGPT, Claude, or Gemini; users can ask questions, generate code, draft content, and perform data analysis through a conversational interface.

What made DeepSeek a global sensation was its cost. The company reported spending roughly $5.6 million on compute for the final training run of its V3 model, compared to a reported figure of over $100 million for OpenAI’s GPT-4. That claim rattled Silicon Valley and sent tech stocks tumbling.

The app rocketed to the top of download charts, briefly overtaking ChatGPT on both Apple’s App Store and Google Play. Within weeks, it had accumulated tens of millions of users worldwide. The hype was real, but so were the red flags that followed almost immediately.

Is DeepSeek Safe? Direct Answer

No. DeepSeek is not considered safe for most users in 2026. While the chatbot itself functions as expected for general queries, documented security failures, including a database breach exposing over one million records, critical mobile app vulnerabilities, and a 100% jailbreak success rate, combined with Chinese data jurisdiction, make it a high-risk choice.

That said, the level of risk depends heavily on how you use it. We’ll break all of that down in this guide.

DeepSeek Privacy Risks: What Data Does It Actually Collect?

This is the most important question most users never think to ask before they start typing. DeepSeek’s privacy policy is unusually transparent in a worrying way.

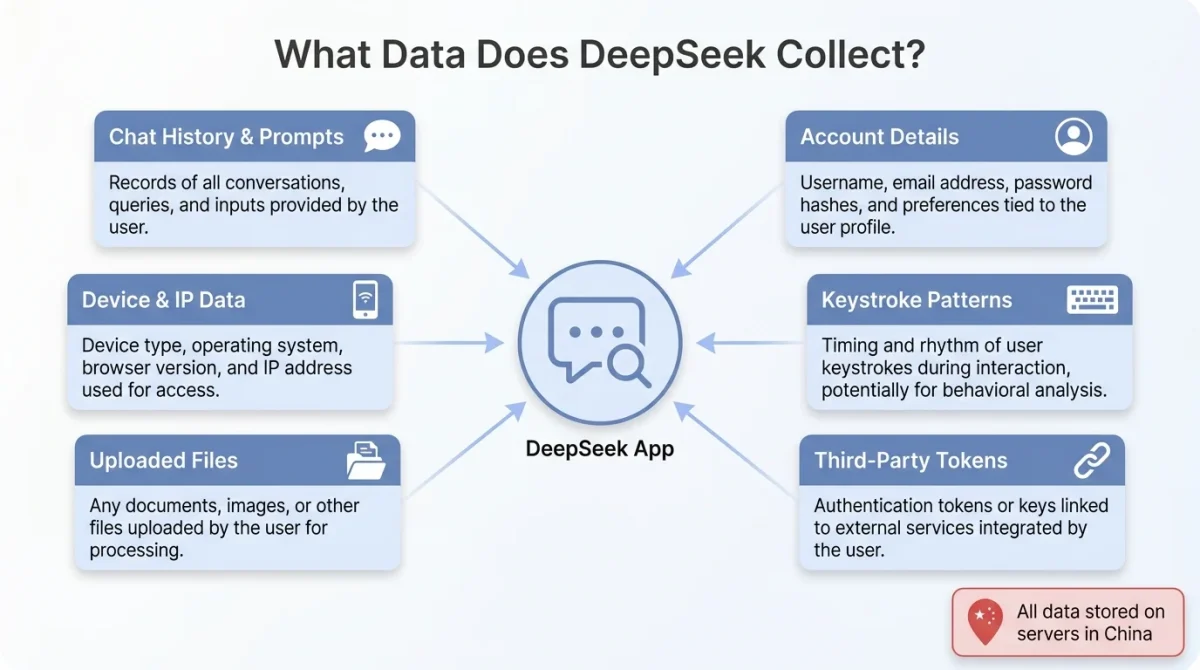

What DeepSeek Explicitly Collects

According to the official DeepSeek privacy policy, DeepSeek collects the following categories of information:

- Account details: Email address, phone number, date of birth, username, and password.

- Content inputs: All text, audio, prompts, uploaded files, and chat conversations.

- Support interactions: Identity verification documents, age verification, and feedback.

- Device data: IP address, unique device identifiers, device model, operating system, and performance data.

- Behavioral tracking: Keystroke patterns, system language, usage data, and automatically assigned user IDs.

The keystroke-tracking details deserve special attention. DeepSeek collects keystroke patterns or rhythms, as described in the Automatically Collected Information section of its policy. This goes significantly beyond what most Western AI companies collect, alarming privacy researchers.
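DeepSeek’s policy does not say exactly what it computes from keystroke data. To show why typing rhythm is sensitive at all, here is a minimal Python sketch of two textbook keystroke-dynamics measurements: dwell time (how long a key is held) and flight time (the gap between releasing one key and pressing the next). The `KeyEvent` type, the `rhythm_features` function, and the sample timings are hypothetical and are not taken from DeepSeek’s app.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class KeyEvent:
    """One keystroke; timestamps in milliseconds (hypothetical format)."""
    key: str
    down_ms: float  # when the key was pressed
    up_ms: float    # when the key was released


def rhythm_features(events: list[KeyEvent]) -> dict[str, float]:
    """Average dwell and flight times for a sequence of keystrokes."""
    # Dwell time: how long each individual key is held down.
    dwell = [e.up_ms - e.down_ms for e in events]
    # Flight time: gap between releasing one key and pressing the next.
    flight = [b.down_ms - a.up_ms for a, b in zip(events, events[1:])]
    return {
        "mean_dwell_ms": mean(dwell),
        "mean_flight_ms": mean(flight) if flight else 0.0,
    }


if __name__ == "__main__":
    # Hypothetical sample: typing "hi" and pressing Enter.
    sample = [
        KeyEvent("h", 0.0, 95.0),
        KeyEvent("i", 140.0, 230.0),
        KeyEvent("Enter", 300.0, 380.0),
    ]
    print(rhythm_features(sample))
```

Averaged over enough typing, measurements like these tend to be fairly consistent for a given person, which is why behavioral biometrics can use them to help recognize a user even when no name or email is attached.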

Third-Party Data Sharing

It doesn’t stop with what you type. Data is shared with major players such as Google (US) and ByteDance (China). The app also uses SDKs from Google, Tencent, and ByteDance for authentication, analytics, and marketing purposes.

Yes, ByteDance: the parent company behind TikTok, which has faced its own long-running battle with Western regulators over data practices.

DeepSeek may share information collected through your use of the service with advertising or analytics partners. The privacy policy also allows it to share data with its corporate group and third parties as part of corporate transactions, though it doesn’t specify what that means in practice.

Where Is Your Data Stored?

This is the crux of the concern. DeepSeek’s privacy policy is explicit: “We store the information we collect in secure servers located in the People’s Republic of China.”

Why does this matter? Chinese law, specifically the 2017 National Intelligence Law, requires Chinese companies to cooperate with state intelligence operations upon request. This means any data stored on Chinese servers can, in theory, be accessed by the Chinese government. DeepSeek itself argues that data privacy laws outside of China do not apply to it, regardless of the data subject’s location.

Key Takeaway

Every prompt you type, every file you upload, and every conversation you have with DeepSeek is stored on servers in China, where Chinese authorities may access it under national law.

DeepSeek Security Flaws: What Researchers Found

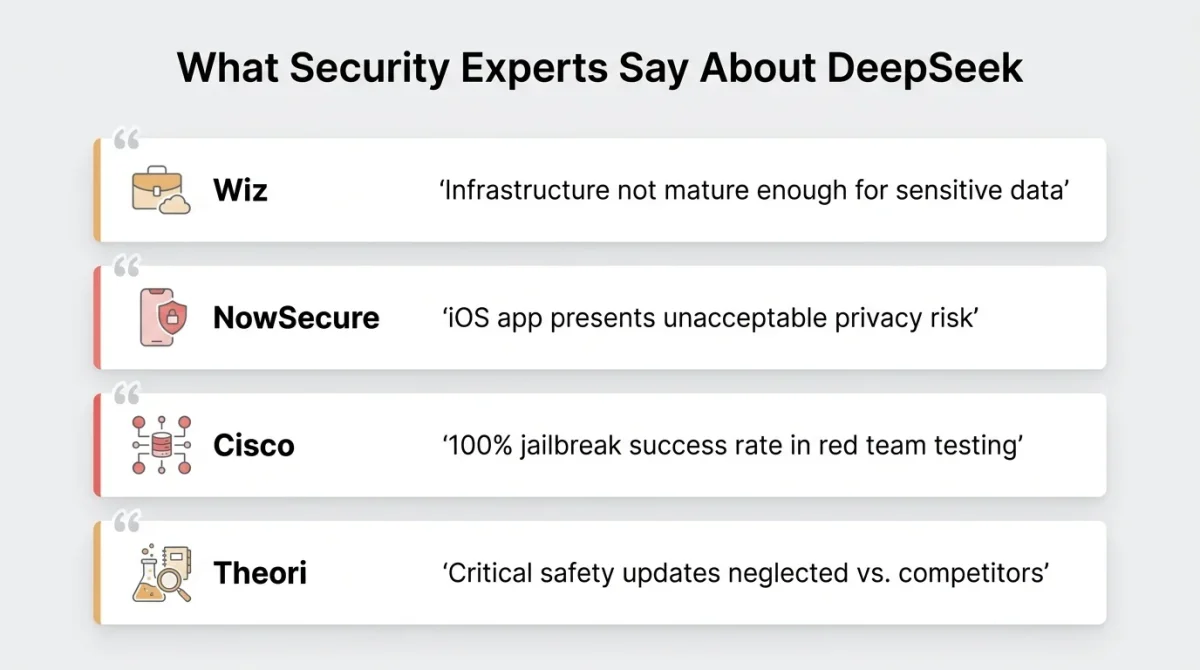

Privacy policy concerns are one thing. But what security researchers uncovered in DeepSeek’s actual infrastructure and mobile apps was far more alarming.

The Exposed Database Breach

Researchers from Wiz uncovered a publicly accessible, unsecured ClickHouse database linked to DeepSeek, exposing over 1 million lines of sensitive logs containing user chat histories, secret keys, and backend operational details.

The scale of the exposure was staggering, and the ease with which it was found made it even worse. Wiz researchers said they found the database almost immediately with minimal scanning. Within 30 minutes of Wiz contacting DeepSeek, the database was locked down, but it is unclear whether bad actors accessed or downloaded the data before it was secured.

Wiz’s CTO described it as a “dramatic mistake,” warning that DeepSeek’s systems are not mature enough to handle sensitive data.

Critical Mobile App Vulnerabilities

The problems didn’t stop at the server level. Security researchers identified critical weaknesses in DeepSeek’s mobile applications. According to NowSecure’s analysis:

- Unencrypted data transmission: Sensitive user data is sent over the internet without encryption.

- Weak cryptography: Use of outdated 3DES encryption with hardcoded keys.

- Disabled security features: iOS App Transport Security (ATS) is deliberately disabled.

- Vulnerable to attacks: High susceptibility to man-in-the-middle attacks and data interception.

NowSecure also found that the iOS app transmits device information in the clear, without any encryption. Anyone with access to the same network could therefore intercept, read, and even modify that data.

In plain terms: if you’ve used DeepSeek on your phone over public Wi-Fi, there’s a genuine risk that your data was exposed in transit.

Hardcoded Encryption Keys and ByteDance Integration

SecurityScorecard’s STRIKE team analysis revealed additional concerns, including hardcoded encryption keys stored directly in app code, SQL injection vulnerabilities that could allow database manipulation, and undisclosed connections to TikTok’s parent company, ByteDance.

Hardcoded encryption keys are a fundamental security error: the keys used to protect data are embedded in the app’s code, where anyone who reverse-engineers the app can easily extract them.
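To illustrate why hardcoded keys are so easy to recover, the minimal Python sketch below mimics the Unix `strings` tool: it scans raw bytes for runs of printable text and flags anything that looks like a key assignment. The byte blob, the `config_key=` label, and the key value are invented for this example. They are not DeepSeek’s code or keys, and real analysis would typically rely on dedicated reverse-engineering tools.

```python
import re

# Invented stand-in for a compiled app binary. It is NOT DeepSeek's code:
# a few non-printable header bytes, one embedded string constant holding a
# 24-byte key (the key size 3DES uses), then more non-printable bytes.
FAKE_BINARY = (
    b"\x7fELF\x02\x01\x01\x00\x00\x00\x13\x00"
    b"config_key=A1b2C3d4E5f6G7h8I9j0K1l2"
    b"\x00\x00\xde\xad\xbe\xef\x00"
)


def extract_strings(blob: bytes, min_len: int = 8) -> list[str]:
    """Return runs of printable ASCII at least min_len bytes long (like `strings`)."""
    pattern = rb"[\x20-\x7e]{%d,}" % min_len
    return [m.decode("ascii") for m in re.findall(pattern, blob)]


def find_candidate_keys(blob: bytes) -> list[str]:
    """Naive heuristic: keep the value of any 'name=value' string mentioning 'key'."""
    candidates = []
    for s in extract_strings(blob):
        if "key" in s.lower() and "=" in s:
            candidates.append(s.split("=", 1)[1])
    return candidates


if __name__ == "__main__":
    print(find_candidate_keys(FAKE_BINARY))  # ['A1b2C3d4E5f6G7h8I9j0K1l2']
```

The underlying point is that a key shipped inside an app is shipped to everyone who installs it; obfuscation can slow extraction down, but the app itself must be able to read the key, so an attacker with a copy of the app can too.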

Pro Tip

If you have the DeepSeek app on your phone, treat any information you’ve shared through it as potentially compromised, especially if you ever used it on a public or shared network.

DeepSeek’s Jailbreaking Problem: A 100% Failure Rate on Safety

Privacy and security are one dimension. But there’s another layer to the “Is DeepSeek safe?” question: what happens when people try to manipulate it into producing harmful content?

The results are deeply concerning. Testing by Cisco found that DeepSeek R1 failed to block any of the jailbreak attempts designed to bypass its safety guardrails. Qualys TotalAI also reported poor results, with DeepSeek failing more than half of its jailbreak tests. This makes the model more susceptible to generating harmful, biased, or manipulated content.
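“Failed to block any” and “failed more than half” are both statements about an attack success rate: the share of jailbreak attempts that got past the model’s guardrails. The minimal Python sketch below computes that percentage. The `JailbreakAttempt` type and both test runs are hypothetical and do not reproduce Cisco’s or Qualys’s actual data or methodology.

```python
from dataclasses import dataclass


@dataclass
class JailbreakAttempt:
    prompt_id: str
    blocked: bool  # True if the model refused, i.e. the guardrail held


def attack_success_rate(attempts: list[JailbreakAttempt]) -> float:
    """Percentage of jailbreak attempts the model failed to block."""
    if not attempts:
        raise ValueError("no attempts to score")
    succeeded = sum(1 for a in attempts if not a.blocked)
    return 100.0 * succeeded / len(attempts)


# Hypothetical results, NOT the actual Cisco or Qualys data.
# Run A: the model blocks none of 50 attempts.
run_a = [JailbreakAttempt(f"a{i}", blocked=False) for i in range(50)]
# Run B: the model blocks 20 of 50 attempts.
run_b = [JailbreakAttempt(f"b{i}", blocked=(i < 20)) for i in range(50)]

if __name__ == "__main__":
    print(attack_success_rate(run_a))  # 100.0
    print(attack_success_rate(run_b))  # 60.0
```

By this measure, 100% means every attempt got through, which is what the Cisco result describes; a well-guarded model would aim for a rate close to zero.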

Tests show that DeepSeek provided detailed instructions for illicit activities such as money laundering and malware creation, whereas ChatGPT refused to comply with similar prompts. These findings suggest DeepSeek has neglected critical safety updates that competitors implemented years prior.

Independent evaluations show that DeepSeek R1 is substantially more likely to generate harmful or biased content than Western alternatives. In one study, it was 11× more likely to produce dangerous outputs and 4× more likely to create insecure code.

This isn’t just an abstract risk. If DeepSeek is deployed in business environments or used for coding tasks, these safety failures translate directly into real-world harm.

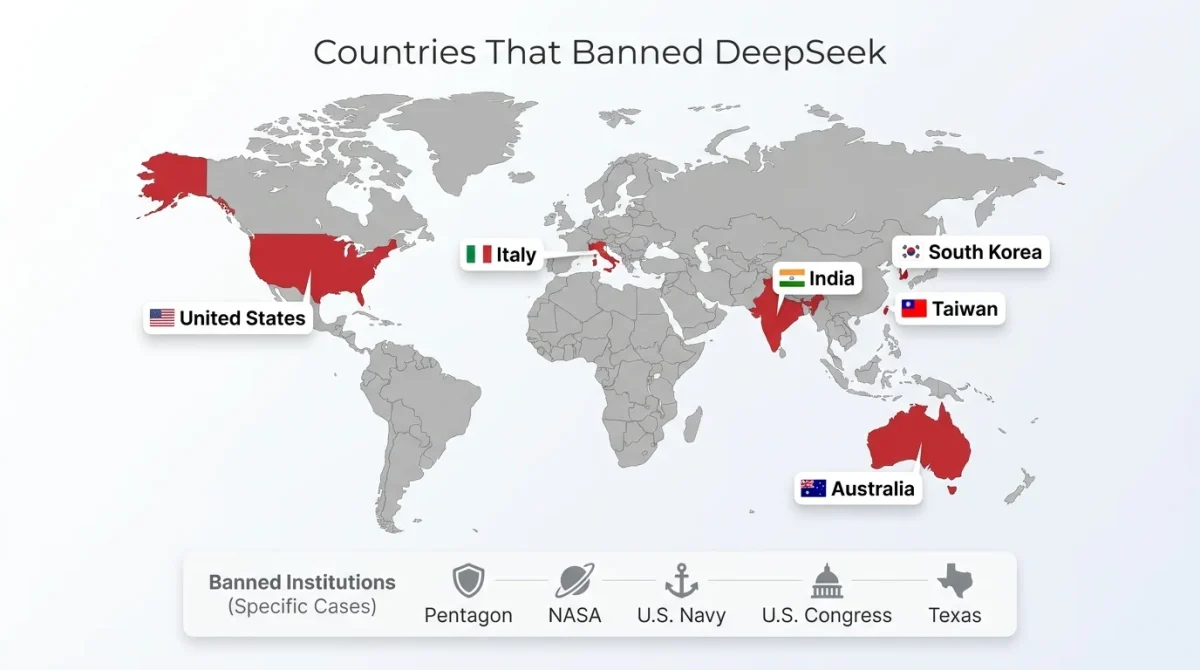

The Global Crackdown: Countries and Institutions That Have Banned DeepSeek

The international response to DeepSeek has been swift and broad. Here’s a comprehensive look at who has acted and why.

Countries With Full or Partial Bans

Italy was the first country to impose a full ban (January 30, 2025), after DeepSeek failed to comply with EU data protection laws.

Taiwan (February 3, 2025) prohibited the use of DeepSeek across all government agencies, public schools, state-owned enterprises, and critical infrastructure, citing risks of data leaks to Chinese servers.

India (January 29, 2025) banned DeepSeek on official government devices due to concerns over the confidentiality of government data.

Australia (February 4, 2025) issued a complete ban on all federal government devices and systems, with security agencies advising that it poses an unacceptable security risk to national infrastructure.

South Korea temporarily suspended nationwide downloads, with multiple ministries banning its use on official devices.

U.S. Government and Agency-Level Bans

The United States hasn’t issued a federal-level ban for civilians, but the government’s own agencies have acted decisively:

- The Pentagon immediately blocked DeepSeek’s use among military personnel after incidents of unauthorized staff access.

- NASA officially prohibited employees from using DeepSeek on January 31, 2025, citing risks related to foreign data access.

- U.S. Navy service members have been instructed not to download, install, or use DeepSeek in any form.

- The U.S. House of Representatives’ Chief Administrative Officer has warned congressional offices not to use DeepSeek while it remains under review.

- Texas became the first U.S. state to ban DeepSeek on state government devices, extending the same ban to other Chinese-developed apps.

European data authorities in Italy, Ireland, Belgium, the Netherlands, and France have issued information requests to determine whether DeepSeek’s data collection breaches the GDPR by transferring personal data to China.

Key Takeaway

When multiple governments, militaries, and intelligence agencies independently reach the same conclusion about a technology platform, that’s not a coincidence; it’s a consensus.

DeepSeek and Chinese Data Law: The Deeper Problem

To truly understand the risk DeepSeek poses, you need to understand the legal framework in which it operates.

China’s National Intelligence Law (2017) mandates that any Chinese organization or individual must support, assist, and cooperate with national intelligence work. There is no equivalent of the U.S. Fourth Amendment, no GDPR, no judicial oversight mechanism that would prevent the Chinese government from compelling DeepSeek to hand over user data.

The company has clearly stated that it stores information in the People’s Republic of China. Because Chinese law allows the government to require companies to share data, any information stored there could be handed over to Chinese authorities on request.

If DeepSeek trains or fine-tunes its models on user prompts, this data could become embedded in the model and unintentionally exposed in future outputs. This means that sensitive data shared with DeepSeek, such as confidential business strategies, customer records, source code, or personal information, could be retrieved by unintended parties, including competitors or adversaries.

This is not a hypothetical risk. It’s a structural feature of how the app and Chinese data law interact.

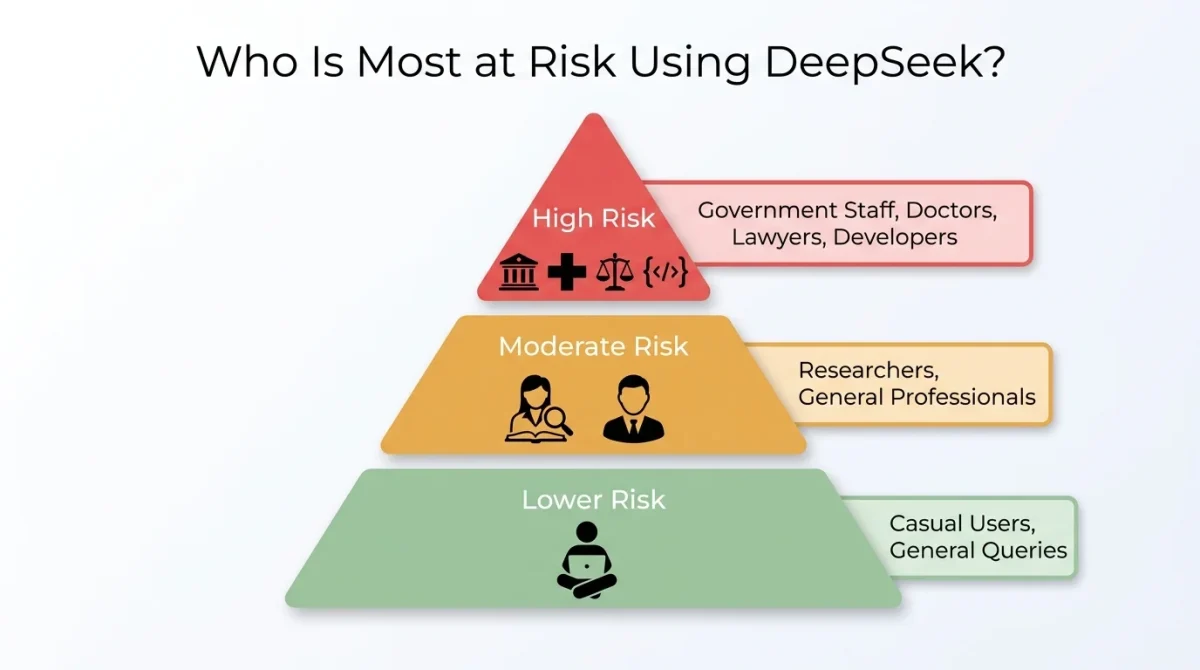

Who Is Most at Risk When Using DeepSeek?

Not all users face the same level of exposure. Here’s a risk-tiered breakdown:

High Risk: Avoid DeepSeek Entirely

- Government employees and contractors handling any classified, sensitive, or policy-relevant information.

- Healthcare professionals entering patient information or clinical data.

- Legal professionals drafting confidential documents, case strategies, or privileged communications.

- Software developers sharing proprietary source code, architecture details, or API keys.

- Business executives discussing mergers, acquisitions, financial strategies, or personnel matters.

- Journalists and researchers working on investigations involving sensitive sources.

Moderate Risk: Use With Extreme Caution

- Professionals using DeepSeek for general research with no sensitive inputs.

- Students using it for coursework unrelated to personal or institutional data.

Lower Risk: General Casual Use

- Asking general knowledge questions with no personal information.

- Using it for creative writing prompts with no identifiable details.

Pro Tip

Even if you consider yourself low risk, the behavioral tracking and device fingerprinting DeepSeek collects means your usage patterns are being logged. There is no truly consequence-free way to use the platform.

DeepSeek vs. ChatGPT vs. Claude: A Privacy Comparison

It’s worth putting DeepSeek’s practices in context alongside the Western alternatives most users would consider.

| Feature | DeepSeek | ChatGPT (OpenAI) | Claude (Anthropic) |
|---|---|---|---|
| Data storage location | China | USA | USA |
| Trains on user data by default | Yes | Yes (can opt out) | No (by default) |
| GDPR compliance | No | Yes | Yes |
| Encryption in transit | Partial/Weak | Strong (TLS) | Strong (TLS) |
| Government data requests | Subject to Chinese law | Subject to US law (including FISA) | Subject to US law (including FISA) |
| Jailbreak resistance | Very low (100% fail rate in tests) | High | High |
| Known data breach | Yes (1M+ records) | No major breach | No major breach |

The comparison makes it clear that DeepSeek’s risk profile is categorically different from that of its Western competitors, not just marginally worse.

Can You Use DeepSeek Safely? Practical Steps If You Still Choose To

If you decide to continue using DeepSeek despite the risks, these practices won’t eliminate the danger, but they can reduce your exposure.

1. Never Share Sensitive Information

This may sound obvious, but users often forget that AI chatbots store everything. Never enter passwords, financial data, personal ID information, medical details, business secrets, or anything you wouldn’t want stored on a Chinese government-accessible server.

2. Use the Web Version Over the App

The mobile app has significantly worse security than the web interface. The iOS app transmits device information without encryption, leaving data vulnerable to interception. If you must use DeepSeek, at least the web version doesn’t have the same level of device-level data harvesting.

3. Use a VPN — But Understand Its Limits

A VPN can mask your IP address and encrypt traffic between your device and the VPN server, which helps on public Wi-Fi, but it cannot prevent DeepSeek from storing the contents of your conversations on its servers. Your prompts and responses are still saved and subject to Chinese law.

4. Create a Separate Account With Minimal Information

If you do register, use a dedicated email address that is not linked to your real identity. Avoid signing in via Google or Apple, as linking those accounts passes additional data, such as access tokens, to DeepSeek.

5. Regularly Delete Your Conversation History

DeepSeek allows users to delete their chat history from the interface. Make this a regular habit. While it doesn’t guarantee server-side deletion, it reduces the scope of what’s easily accessible.

Pro Tip

Short of running the open-weight model on your own hardware, the most secure way to use DeepSeek’s model is through a third-party host that runs it on Western servers, such as Perplexity AI, which has confirmed it hosts DeepSeek R1 on US/EU servers with no data transmission to China.

Safer DeepSeek Alternatives in 2026

If DeepSeek’s capabilities are what drew you in (the reasoning, the coding ability, the price), there are legitimate alternatives that don’t carry the same geopolitical baggage.

1. Claude (Anthropic)

Strong at reasoning, writing, and coding. Strict privacy policies with no default training on user conversations. Anthropic operates under US law with transparent data practices.

2. ChatGPT (OpenAI)

The most feature-rich general AI assistant. You can opt out of conversation training in settings. Operates under US/EU jurisdiction with GDPR compliance.

3. Gemini (Google)

Deeply integrated with Google Workspace. Strong reasoning capabilities. Subject to Google’s privacy policy and Western regulatory frameworks.

4. Meta Llama (Self-Hosted)

If you value DeepSeek’s open-source approach but want to avoid Chinese data jurisdiction, Meta’s Llama models are openly available, highly capable, and can be run entirely on your own hardware. Running a model locally means your prompts never leave your device.

5. Mistral AI

A European open-source AI company with strong GDPR compliance. Its models are available via API and can be self-hosted.

What Experts Are Saying About DeepSeek in 2026

The security and privacy research community has been unusually united in its assessment of DeepSeek, which is telling in itself, given how rarely experts agree on anything.

Wiz’s CTO called the exposed database a “dramatic mistake,” warning that DeepSeek’s infrastructure is not mature enough to handle sensitive data. NowSecure’s mobile security analysis flagged the iOS app as presenting an unacceptable privacy risk. Cisco’s red team found a 100% jailbreak success rate. Theori’s AIOS security team noted that DeepSeek has neglected critical safety updates that competitors implemented years prior.

Privacy regulators across Europe have launched GDPR investigations. The U.S. Congress is debating legislation that could ban DeepSeek nationwide. The consensus is unmistakable: this platform cannot be trusted with sensitive information.

Final Thoughts

Let’s be direct: for most users with anything to protect, DeepSeek is not worth the risk.

The performance is real. The price is genuinely attractive. But the combination of aggressive data collection, server storage in China under Chinese law, critical mobile app vulnerabilities, a massive exposed database breach, and a 100% jailbreak failure rate paints a consistent picture: this is a platform built fast, deployed fast, and secured poorly.

The fact that governments in the US, Italy, Australia, Taiwan, India, and South Korea have independently reached the same conclusion and banned or restricted it, at minimum in their most sensitive environments, should carry significant weight.

If you need a capable AI assistant, Western alternatives are strong, well-regulated, and free of geopolitical baggage. If you specifically want to experiment with DeepSeek’s model itself, use a platform that hosts it on Western infrastructure.

Your data is worth protecting. Don’t let a free chatbot be the reason it ends up somewhere you never intended.

Frequently Asked Questions (FAQs)

Is DeepSeek safe to use for personal use?

For completely generic queries with no personal information, the immediate risk is low. But the platform still collects behavioral data, keystroke patterns, and device identifiers and stores them all on Chinese servers. For personal use involving anything sensitive, it is not safe.

Is DeepSeek banned in the US?

DeepSeek is not banned for civilian use at the federal level across the United States. However, multiple federal agencies, including NASA, the Pentagon, and the U.S. Navy, have banned it for official use. Several states have also banned it on government devices.

Does DeepSeek sell your data?

DeepSeek’s privacy policy permits it to share user data with advertising and analytics partners, its corporate group, and third parties in connection with corporate transactions. Whether this constitutes selling depends on the legal definition, but data is definitely being shared.

Can the Chinese government access my DeepSeek chats?

Under China’s National Intelligence Law, Chinese companies are required to cooperate with government intelligence requests. Since all DeepSeek data is stored on Chinese servers, it is legally accessible to Chinese authorities upon request.

Is DeepSeek’s AI model dangerous in itself?

In Cisco’s independent testing, the model failed to block 100% of jailbreak attempts, meaning it can be manipulated into generating harmful content, including instructions for illegal activities, far more easily than ChatGPT or Claude.

What should I do if I’ve already used DeepSeek?

Delete your account if possible, remove the app from your devices, change passwords for any accounts you may have discussed, and treat any sensitive information you shared as potentially compromised. If you discussed work-related information, notify your security or compliance team.

Are there safe ways to use DeepSeek’s AI model?

Yes, through third-party platforms that host the open-source DeepSeek model on Western servers. Perplexity AI has confirmed that it hosts DeepSeek R1 on US/EU infrastructure and that no data is sent to China.